Hive Punks Update

What a day! I want to apologize for the issues today. I can't say sorry enough for what happened.

I will take a moment to explain what happened, what went wrong, what didn't, and how things are going.

As I said in our talk, Punks on Hive is built on Hive Engine using Hive Engine NFT functionality along with IPFS. IPFS, if you are not familiar, is a decentralized storage system, but it can be slow. That being said, IPFS is the de facto standard for storing NFT media assets and metadata.

Knowing about these issues, I planned ahead and used IPFS to stamp onto each NFT, for posterity, the media asset (the image), the metadata (attributes), and the transactional data. This data is available on every NFT and can be viewed using Hive Engine if you know how to do this.

The problem is IPFS is slow, so I moved this data to a third party system (Postgres database) on Supabase. This is an open source Firebase clone. I did a ton of testing prior to launch and even pushed beyond the max distribution of Hive Punks with Real-Time Rarity enabled and performance was amazing. Despite how complicated it is to calculate rarity on the fly, it was working great.
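To show why rarity is expensive to compute on the fly, here is a minimal sketch of one common scoring approach: each trait value contributes the inverse of its share of the collection, and a punk's score is the sum over its traits. The formula, the `rarity_scores` name, and the sample attributes are assumptions for illustration, not the scoring Punks on Hive actually uses.

```python
from collections import Counter

def rarity_scores(punks):
    """Score each punk as the sum of (total punks / punks sharing that trait value)."""
    total = len(punks)
    # Count how many punks carry each (trait, value) pair.
    counts = Counter(
        (trait, value)
        for attrs in punks.values()
        for trait, value in attrs.items()
    )
    return {
        punk_id: sum(total / counts[(trait, value)] for trait, value in attrs.items())
        for punk_id, attrs in punks.items()
    }

# Hypothetical attribute data, for illustration only.
punks = {
    1: {"type": "Human", "hat": "Beanie"},
    2: {"type": "Human", "hat": "Cap"},
    3: {"type": "Zombie", "hat": "Cap"},
}
scores = rarity_scores(punks)
```

With a scheme like this, a higher score means a rarer punk, and every new mint changes the trait counts, so the score of every existing punk can shift each time one is minted.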

When Punks on Hive launched, the first 1,500 Punks went really well and everything happened within seconds of them being minted. Then Supabase started to act up. It kept freezing, and would allow a few inserts and then go offline for 5-15 minutes. This happened shortly before I went on stage at HiveFest.

The problem got worse as time went on. Not only were the delays longer, it was also falling further behind the incoming minting. The minting of Punks didn't slow down, and 25% of the total supply was minted within a couple of hours of launch, before I even got on stage, but the problems just kept snowballing.

After some digging, I found the problem was related to uploading image assets. The process Punks on Hive uses is to immediately generate the attributes, image, and metadata and store them on IPFS, then take that IPFS folder and stamp it onto a freshly minted NFT on Hive Engine. Another process monitors Hive Engine for new PUNK NFT tokens, then pulls the image from IPFS and stores it in an image bucket for fast access, and uses the metadata to populate a database. This process ensures the IPFS data only has to be accessed once, which eliminates the IPFS performance issues.
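The indexing half of that process can be sketched roughly as follows. This is an illustration under stated assumptions, not the real Punks on Hive code: the block and payload shapes are simplified, the `ipfs` property name is hypothetical, and plain dictionaries stand in for the image bucket and the database.

```python
import json

def extract_new_punks(block, symbol="PUNK"):
    """Return the IPFS folder of every NFT of `symbol` issued in this block."""
    found = []
    for tx in block.get("transactions", []):
        # Assumed shape: NFT mints appear as an "issue" action on an "nft" contract.
        if tx.get("contract") != "nft" or tx.get("action") != "issue":
            continue
        payload = json.loads(tx["payload"])
        if payload.get("symbol") == symbol:
            found.append(payload["properties"]["ipfs"])  # hypothetical property name
    return found

def mirror_punk(ipfs_folder, fetch_ipfs, image_bucket, metadata_db):
    """Read from IPFS once, then serve from the bucket and database afterwards."""
    if ipfs_folder in metadata_db:
        return False  # already mirrored, so IPFS is not touched again
    image, metadata = fetch_ipfs(ipfs_folder)
    image_bucket[ipfs_folder] = image
    metadata_db[ipfs_folder] = metadata
    return True

# Illustration with a fake block and a fake IPFS fetcher.
block = {"transactions": [
    {"contract": "nft", "action": "issue",
     "payload": json.dumps({"symbol": "PUNK", "properties": {"ipfs": "QmExampleFolder"}})},
    {"contract": "tokens", "action": "transfer",
     "payload": json.dumps({"symbol": "BEE"})},
]}
calls = []

def fake_fetch(folder):
    calls.append(folder)
    return b"png-bytes", {"type": "Human"}

bucket, db = {}, {}
for folder in extract_new_punks(block):
    mirror_punk(folder, fake_fetch, bucket, db)
    mirror_punk(folder, fake_fetch, bucket, db)  # second call is a no-op
```

The point of the `metadata_db` check is the "accessed once" property: after the first successful mirror, nothing on the read path depends on IPFS.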

The problem is Supabase kept going down during this process. Even though these image files are only 2KB and the metadata and database inserts are equally tiny, the entire system would go down after 2-10 inserts. That is about 15KB-30KB of data, around the size of a typical email message without images. After repeatedly trying to find a solution and prevent these outages, I realized that if I stopped using them for image storage, the problem was less extreme. It was still bad and unreliable, with no solution currently available, but it allowed the process of extracting and updating metadata to move forward.

A few hours ago I gave up trying to upload images to them and started using Backblaze B2 to store the images. I had it process all images generated so far in parallel with the metadata catching up. The images uploaded and got caught up in a fairly short time (they are tiny after all), but the metadata still lags behind by quite a bit. During this process, every single Hive Engine block has to be downloaded and analyzed, which is very slow when you fall behind but can be done in real time when you are caught up.
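A catch-up loop of this kind is usually written so that a crash only costs the block in flight rather than the whole run. The sketch below is an assumption about how such a loop could look, not the actual indexer: `catch_up`, the callbacks, and the use of `ConnectionError` to model a database outage are all hypothetical.

```python
def catch_up(start_block, head_block, fetch_block, handle_block, save_checkpoint):
    """Process blocks in order, recording progress after each one.

    Returns the number of the next block that still needs processing.
    """
    current = start_block
    while current <= head_block:
        try:
            handle_block(fetch_block(current))
        except ConnectionError:
            # Stop here; a later run resumes from the last saved checkpoint.
            return current
        save_checkpoint(current)
        current += 1
    return current

# Illustration: the "database" fails once while handling block 5.
state = {"last_done": 0}
fail_once = {5}

def handle(block):
    if block in fail_once:
        fail_once.discard(block)
        raise ConnectionError("database unavailable")

def save(block_number):
    state["last_done"] = block_number

next_block = catch_up(1, 8, lambda n: n, handle, save)
resumed = catch_up(state["last_done"] + 1, 8, lambda n: n, handle, save)
```

In this run the first call stops at block 5 with blocks 1 to 4 checkpointed, and the second call picks up from block 5 and finishes through block 8.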

Every time I would start catching up, Supabase would crash or become non-responsive.

At this point, it is still catching up and is 8400 blocks behind. Everyone who minted an NFT received it almost instantly, the Hive and Hive Engine side of things worked well and was quite successful.

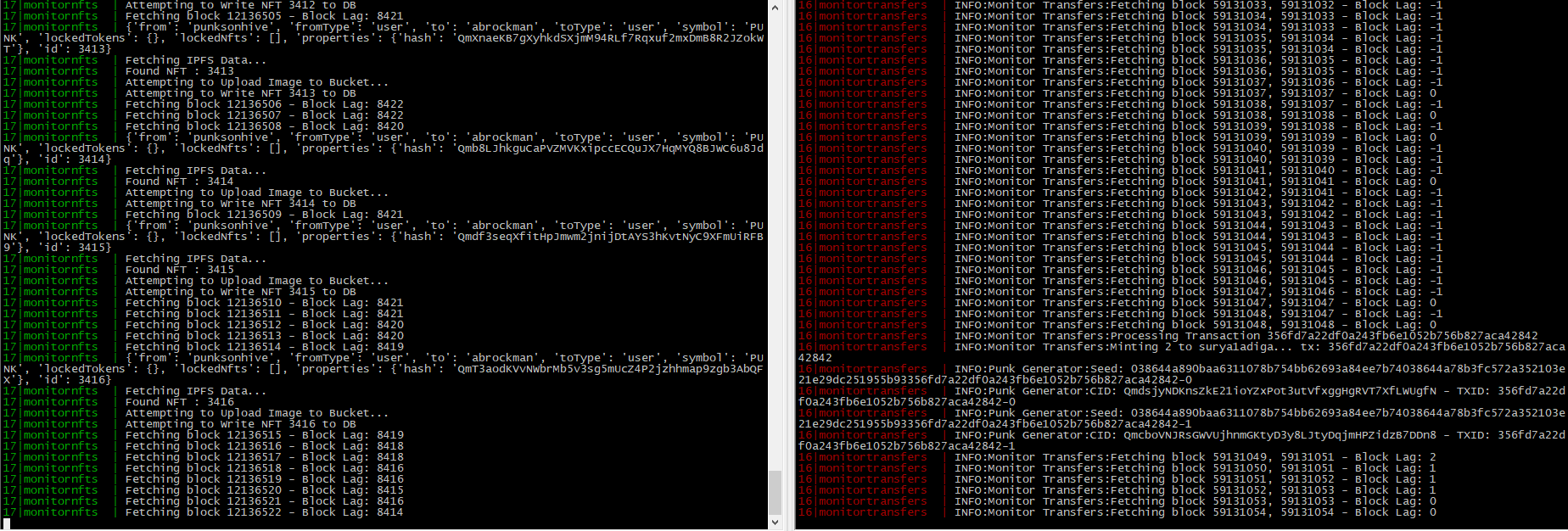

As you can see here on the right side, it is caught up and processing incoming transactions, quickly turning them into NFTs and even storing the IPFS data and stamping the NFTs only seconds after they are minted. On the left side you can see that the process of indexing Hive Engine blocks and extracting the NFT data into the Postgres database and S3 image buckets is far behind.

Throughout the day I tried all sorts of things to eliminate the issues with Supabase. Even before the problem, I had artificial delays of around 1 second per mint to ensure nothing gets rate limited or hammers any resource too hard, regardless of how many transactions happen. I fully expected any rush would cause it to fall behind, but that it would quickly catch up during the dips in between. There were no dips, but even so it would have had no problem keeping up if it hadn't kept crashing. It was only when I stopped using their image hosting that it was even usable.
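The artificial delay can be pictured as a simple fixed pause between mints. This is a minimal sketch under assumptions: `process_with_delay` and its parameters are hypothetical, and the `sleep` argument is injectable only so the example can run without actually waiting.

```python
import time

def process_with_delay(requests, mint, delay_seconds=1.0, sleep=time.sleep):
    """Mint requests one at a time, pausing between consecutive mints."""
    results = []
    for index, request in enumerate(requests):
        if index > 0:
            sleep(delay_seconds)  # spread the load instead of bursting
        results.append(mint(request))
    return results

# Illustration: record the pauses instead of really sleeping.
pauses = []
minted = process_with_delay(["a", "b", "c"], mint=str.upper, sleep=pauses.append)
```

With a fixed pause like this, a queue of N mints takes roughly N times the delay to drain, which is why a sustained rush with no dips leaves no room to recover from an outage.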

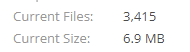

To put things in perspective, 3,415 images take up only 7MB. This shouldn't remotely be a problem. I even upgraded to Supabase's Pro tier despite the fact I wasn't even remotely near their usage limits.
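The scale is easy to check with a few lines of arithmetic. The only assumptions here are reading "7MB" as 7 MiB and using the formatted capacity of a standard 1.44MB floppy disk.

```python
import math

images_stored = 3_415
bytes_stored = 7 * 1024 * 1024   # "7MB", read here as 7 MiB (assumption)
total_supply = 10_000
floppy_bytes = 1_474_560         # formatted capacity of a "1.44MB" 3.5-inch disk

bytes_per_image = bytes_stored / images_stored
full_set_bytes = bytes_per_image * total_supply
floppies_needed = math.ceil(full_set_bytes / floppy_bytes)
```

That works out to a little over 2KB per image, and the images for all 10,000 Punks would fit on about 15 floppy disks.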

Right now I have a process that pulls down the entire database of every Punk, scans for any that I don't have an image stored for, and uploads the image to B2. The Punks front end has been updated to serve images from B2. This allowed it to come back to life, but the metadata is still stored on Supabase, and anytime it has a hiccup, it causes the site to act up as well. Right now I'm trying to let it catch up so all the minted NFTs can be entered into the database. The entire database is currently around 1.5MB, small enough to nearly fit on a floppy disk.
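The backfill described here boils down to a set difference followed by uploads. The sketch below is illustrative only: `backfill`, the `<id>.png` key naming, and the callbacks are hypothetical, and a dictionary stands in for the B2 bucket.

```python
def find_missing_images(punk_ids, stored_image_ids):
    """Return the punk IDs that have a database row but no stored image."""
    stored = set(stored_image_ids)
    return [punk_id for punk_id in punk_ids if punk_id not in stored]

def backfill(punk_ids, stored_image_ids, load_image, upload):
    """Upload an image for every punk that does not have one yet."""
    uploaded = []
    for punk_id in find_missing_images(punk_ids, stored_image_ids):
        upload(f"{punk_id}.png", load_image(punk_id))  # hypothetical key naming
        uploaded.append(punk_id)
    return uploaded

# Illustration: punks 2 and 4 are missing their images.
uploads = {}
done = backfill(
    [1, 2, 3, 4],
    [1, 3],
    load_image=lambda punk_id: f"image-{punk_id}".encode(),
    upload=uploads.__setitem__,
)
```

Because it only uploads what is missing, a job like this can be rerun after every crash without redoing earlier work.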

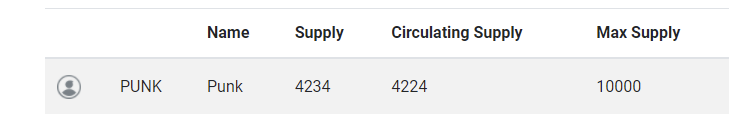

Once the process is done, and everything is caught up, I will look into other options. Unfortunately a large portion of the site was built around Supabase, and I can't just rip it out in five seconds. Also, almost 50% of the Hive Punks have been minted, we are currently at 4,208 out of 10,000 Punks minted. Once all 10,000 Punks are minted, a database isn't even needed, all the metadata can be stored in a JSON or CSV file like most NFT projects do. All the images still need to be hosted somewhere so no one has to deal with IPFS problems.
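Once the collection is final, replacing the database with a static file is mostly an export step. A minimal sketch, assuming each database row has an `id` and an `attributes` mapping (both field names are hypothetical):

```python
import json

def export_static_metadata(rows):
    """Serialize all punk metadata into one JSON document keyed by punk ID."""
    by_id = {
        str(row["id"]): row["attributes"]
        for row in sorted(rows, key=lambda row: row["id"])
    }
    return json.dumps(by_id, indent=2)

# Hypothetical rows, for illustration only.
rows = [
    {"id": 2, "attributes": {"type": "Zombie"}},
    {"id": 1, "attributes": {"type": "Human"}},
]
static_json = export_static_metadata(rows)
```

A file like this can be served from any static host or CDN, so nothing on the read path can crash under load the way a live database can.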

One observation throughout this, the Hive blockchain and even Hive Engine performed well and had virtually no impact despite the massive amount of transactions done today. Most blockchains would have been on their knees trying to process 4,200 NFTs in half a day.

I can't express how sorry I am about how this transpired. I've been up for a couple of days now preparing a great experience for HiveFest and trying to do something no other NFT project has attempted, and it was all thwarted, not by the blockchain, but by a third party service. What really kicks me in the gut is how well everything worked during the 2-3 weeks of development, with not a single problem showing up even with 10K+ Punks on testnet, and how pathetically small this dataset is to even be a problem. I could literally buy a box of floppies and store all the images and metadata for the entire project if every single one was minted. I know part of the problem is so many people viewing so many of these tiny images at once. I had plans to limit how many you can view at one time, but this broke the ability to see Punks update in real time.

As of right now, 4,234 Punks have been requested, and 4,234 Punks have been minted.

The metadata extraction is about 7,800 blocks behind and trying its best to catch up. Things have been fairly stable for the last hour, but it looks like the database needs another reboot. Still, 1 hour is far better than the 10-20 seconds it managed for most of the day.

I am able to keep up pushing the images as fast as they get entered into the database. If the database doesn't keep crashing, it will catch up, but every time it crashes it sets it back a bit.

I hate that this has put a dark cloud over what I think is a really cool twist on something that was done very differently on other chains. Most of them couldn't even handle doing it this way, and it wasn't the chain that failed us.

UPDATE: The more I think about it, the more I think I know the problem.

The database is silly small. Even with 10K Punks it is likely only around 5MB. The problem, I believe, lies in the fact that I took advantage of Supabase's real-time features, which really can't handle hundreds or thousands of users updating frequently, even when the data is tiny. I believe if I had never tried to do real-time updates, it would never have been a problem. The problem wouldn't exist if I had generated all 10K ahead of time like all the NFT projects I know of. You would think a floppy disk worth of data wouldn't be a major problem for a service with millions in funding that promotes "real-time updates".

With this in mind, I turned on infinite scrolling and disabled real-time updates, so you will have to hit F5 now to see updates. If my theory is right, this should resolve the issue and allow things to catch up.
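Infinite scrolling replaces a pushed, always-live list with pages the browser asks for as the user scrolls. A minimal sketch of the paging logic, assuming a simple offset scheme (`get_page` and the page size of 50 are hypothetical):

```python
def get_page(punks, offset, page_size=50):
    """Return one page of punks plus the offset of the next page, or None at the end."""
    page = punks[offset:offset + page_size]
    end = offset + len(page)
    next_offset = end if end < len(punks) else None
    return page, next_offset

# Illustration: walk through a collection of 120 punks, one page at a time.
all_punks = list(range(120))
page_sizes = []
offset = 0
while offset is not None:
    page, offset = get_page(all_punks, offset)
    page_sizes.append(len(page))
```

Each viewer then costs the backend one small query per scroll step, rather than a subscription that has to be notified on every mint.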

UPDATE 2: Been on the phone with Supabase and looks like someone has been attacking the instance trying to brute force it. Research ongo

I bought quite a few after seeing a post from @gungunkrishu, without even knowing how or where to see them. Maybe I will catch up later and find out all the details, but I hope everything is safe and we won't lose anything.

You won't lose anything, in fact you already have them. It's just that the website hasn't caught up. All the NFTs are in your possession and have the properties stamped on IPFS.

Great, when can I see them ? And how many total I have ? Let me know, once you are free.

I think, you're beating yourself up too much. A couple of hiccups on the first day of potentially one of the best projects yet is no biggie in my book.

In any case, I just woke up (it is 8 a.m. in Germany) an hour ago and my two punks were there.

Thank you for explaining in details, so we all understand the intricacies behind Hive-Punks. It is interesting to see, how things work on the backend side. Never even thought of it, to be honest.

Have a nice weekend.

completely agree with this. You've launched an amazing and ambitious project that is really exciting for the hive nft ecosystem. Before the crashes, the live updating rarities was working beautifully and it was such a thrill to see my punks continually changing order, and to watch the details updating. Im happy that the actual minting hasnt been a problem and that it is just the image loading that is causing the trouble, this gives me confidence that the project will overall be a success. Congratulations and thankyou for all your hard work. p.s. I have some generative nft ideas that I would love to talk to you about, especially in regard to initial rarities and live updating rarity, so let me know if you're up for having a chat sometime. x basil

You're fine, mate. Forgive yourself, we'll wait.

You'll sleep well tonight :)

Congrats on the successful launch, despite the software hiccups. Selling almost half in less than a day is awesome. I bet it will cross 5000 sold before the announcement post is 24 hours old.

I bet, they are almost sold out already ^^.

It’s all cool, we all understand that things like this do happen some times. Glad I was fortunate to mint mine when I could with the help of a friend. Good work, we appreciate you.

Interesting how the legacy DB crap is swamped and the blockchain is fine!

BTW we really need a way to link to a specific Punk.... but you know this!

Wow just had a look at @therealwolf @inji and he's got a great page already but the images are broken.

https://inji.com/@brianoflondon/nfts?t=hive

https://inji.com/nft/punks-on-hive/QmeoNhLRT9nb7M3yFiZWMfPPCknDRR7HnqWm8Qwg41KeRS

Working on a fix. Will be deployed shortly. 😃

Posted via inji.com

I got mine eventually, and I never doubted that I would. Thanks so much for this awesome project!

U did well, the 1st to test it out will always find problems that haven't been addressed before.

Please make some exclusive things that only hivepunks has access to :)

Posted Using LeoFinance Beta

Can't get logged in sadly..no hivesigner access for Android users ???

Np mate, 21 NFTs minted 21 NFTs in my pocket ;)

Yesterday i bought one punk and after 50 minutes he arrived. Today i bought my second one and i am still waiting. But there is no problem. I can wait.

The main thing is that they arrive.

And for the first day, that was already very successful. A few teething problems were certainly to be expected.

I am satisfied.

You actually have it, just the metadata and site are still behind.

Thank you for that great project 👍

I think we can forgive you, I love this kind of technical analysis shit!

!PIZZA

lol, I'm late at party. more than 10K would have been great because hivers buy anything :D

thank you for this. i love reading these. i think you did amazingly, especially because people were trying to take the instances down! all good, onwards and upwards.

Hey marky, I minted a few of them and so far I can't see them (I assume they're already minted). I also saw that I have a transaction without the 'empoderat' memo. Could you check if everything is OK from your side? (When you have a bit of spare time, not in a hurry ofc.)

thanks in advance.

The minting process is nearly instant, the updating DB is lagging, but a lot of changes have been made in the last few hours and it is catching up.

Don't worry about that transaction, the name is optional it will default to sender if not specified.

Perfect, thx

Congratz and keep it up with that work. This is awesome and I'm loving it :)

Got my 2 Punks - maybe a stupid question but rarity score means what? The higher the score the more rare or the other way round?

yeah, high is good.

make sense - thanks lady :-)!

Strange I had these two

Today only one left in my gallery, the other one is invisible but probably not sold as well.

Thank you for sharing. I just minted 1 using some of the sales from my NFT's on @nftshowroom

Very happy to be part of this project.

How do I know if my minting is successful or not? I think I have sent a wrong transaction, can you please have a look and tell me if it's successful https://hiveblocks.com/tx/8e50ce64383bd8e13ea7632afc941278a95cf434

You have been refunded.

Not that bad I think, don't be too hard on yourself. Funny thing is I can see the punk on my mobile keychain now but not on desktop browser? Should I just wait longer?

Simple yes or no will suffice, I take it you're busy these days ;-)

update: nevermind, just realized anything on the market is removed from the gallery

What a great project! I've never bought an NFT before, maybe a punk can be my first! :)

That sucks to hear..... I really hope things will get better and you'll be able to do well. People should seriously stop with all these attacks. These scumbags aren't helping the world with their antics.

!PIZZA

Hi, can you please check my transaction? Yesterday I bought 5 Punks but only 1 was delivered. Today (1 hour ago) I bought 1 Punk and it has been delivered now. Are the other 4 lost?

Also, the ID of the one delivered today is much higher. Could you please check? I don't want to miss out on buying new Punks if there was a problem with the first purchase yesterday. It would also be nice to see what Punks I have so I can decide if I need more.

I think the punks are so cool that I immediately loosened up another 20 hive and ordered a third one.

If I press send on the character card, can I send the punk to another hive user? Would be a nice Christmas present for a friend ;)

Yes, you can send cards to others.

Thanks for the update! Looking forward to any new things (if any) we do with these!

A few things to report with the website: I minted some 10 hours ago and the page seems to update to showing "Gallery (9)" but reverts to 4 when going to the gallery page. I tried hard refreshes CTRL+F5 and it still does this. I tried to log out and it does not let me log out. Using a different browser that had previously went to my gallery while not logged in, I get the same issue: Shows 4 and there's nothing I can do to get it to show 9.

Viewing user galleries in a private window or while not logged in still requires a refresh after loading. Otherwise it shows it as blank.

Not sure what is happening in private mode. I looked and noticed the same thing. It's like the backend query doesn't run properly. I decided it wasn't a critical problem for launch.

The count was added as a quick thing yesterday, but removing the real-time subscriptions likely caused a few things I need to address.

My 9 punks are showing up in the gallery when not logged in but in another browser, logged in, I can't seem to get the new 5 ones to show or log out. Looks like the only solution is manually deleting site data and logging in again.

After doing that, I now see the 9 I should see in there. It would be nice to see the one I have up for sale there too, now that I think about it.

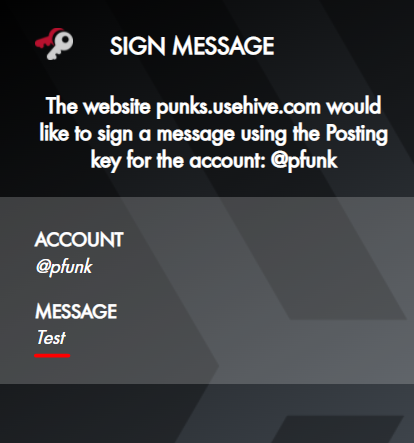

Also, tiny little thing not worth changing now, but logging in you still sign a message that says "Test"

Have you tried Control F5, could be a cache issue with beta, I had to do that so it didn't try to use old data.

You can find yours on the market, but I have some plans for changes, I had to limit the functionality to make sure I can have a launch for Hivefest.

It's just a temp message to verify keychain, but I changed it and will push it out today.

Can you check the dev console? Are you getting a message about Vuex?

Yes

Yes the storage of all nfts exceeded browser storage. I am working on a quick fix prior to entire database turning into static file.

Another point of feedback, I didn't notice it but the punk I put up for 500 HIVE got sold and it seems I got paid 450 SWAP.HIVE. A 10% fee is fine but that should be presented up front (like on the sell dialog), as that's the first I saw of a fee. Mentioning it gets paid in SWAP.HIVE would also be useful, as not everyone would think to check that.

It is on the bottom of the page under the FAQ. It is similar fees as NFT Showroom. I don't think they mention it either as I got the same shock. When I catch up fixing other things, I will try to find a way to list it in another place that is easier to see.

I'm going to buy many of these punks and leave them for a few years to appreciate. I believe the price is going to go up more.

Howdy, I think I minted some and the payment definitely went through. I can see the number in gallery but not the pics.

After reading this, basically is a matter of time and I can go to sleep safely? lol

Awesome job btw. These things are ... Well, we all are early ^_^

LMK, and thanks!

Renovatio

!BEER

!PIZZA

Thanks for the detailed information, wow, I am really impressed, and sorry to bother you on Discord, I know you're really busy. I'll be patient and wait for everything to catch up.

I just bought one and I don't see anything in my gallery. Even the gallery is not visible anymore after refreshing the page. What went wrong?

I am working on a site update, the amount of punks exceeded local browser storage, I did not expect them to sell out in 2 days.

okay, thanks for explaining! NFTs are hot you know ;)

Thanks for the update. The Hive Community has the most advanced Punks (technically design, process) in the entire blockchain space. Other High performance, zero fee, DPoS chains like TELOS have a decentralized storage solution (dStor). Maybe you could check. https://dstor.cloud/

Congrat for your work.

Chill Marky all excellent launches have issues.

Remember coinbase breaks every pump.

I think it was a huge success, don't stress

I'm just trying to be transparent, but also share some of the process. I'm good, just tired.

I do appreciate the explanations and the acknowledgement that people may be a little frustrated with the site, even though I don't place any blame or hold any grudges. I am glad to have been able to take part in what seemed like an extremely successful launch in most ways. 10k of them gone in 2 days?! Wow.

!PIZZA

I tried to buy one to be one of the cool kids, one from @whatsup in particular because I know she is a cool kid. It said the transaction was broadcast but nothing happened. Out of my frustration I proceeded to sell all of @whatsup's punks without having bought them. The transactions were all broadcast, but I imagine those didn't work either.

I believe I failed to become a cool kid on two accounts.

Let me know if you fix this so I can become a cool kid. I will promptly go sell all of @whatsup's punks without having bought them, and then buy them from myself.

You need SWAP.HIVE for the market as it is Hive Engine and it can't work with Hive directly.

Ah thanks. And sorry I cheated on you @whatsup. I found the female punk version of myself. I spent all my money on dinner with her. She was cheaper and rarer all at once.

Wow, that is crazy. I really admire those that can create awesome things on Hive. The Hive Punks NFTs I know are super popular with my Tribe The CTPSwarm.

Unfortunately, I am kind of new to Hive so I was unable to take advantage of Hive Punks.

Great job in figuring out the problem.

What makes all the difference is that on Hive we know you, and we can talk to you.

I felt that the temporary disappearance of my punks was handled well, and I did not even get a chance to be nervous.

That is one of the cool things about Hive, it has "Community Built-In". Take a project like Splinterlands and imagine that on Ethereum. Who would you talk to? Who would you play against? Who would you discuss strategies and the market with? There isn't anyone there, there is no community, just a bunch of wallet addresses.

I had a few instances here when the Gods themselves came down and answered my lowly questions ;)

I have a question, will there be some kind of market survey in the future? I would be interested to know which punks are traded there and how.

The story with the 5,555 hive for one punk is already a mega success for the project.

I do plan to add more things to the market, and set up a market history.

That's fantastic. Thank you!
