Was this post WRITTEN BY MACHINE? New Harvard AI Can Recognize That

Inctroduction

I have recently read a not very favorable book review:

Tom Simonite does not keep it simple. He doesn't give you enough info on a subject to make the reading of the book enjoyable. He has over 400 pages of footnotes, so that is a way of getting your work for a subject out of the way. This book was so depressing to me, I can't even talk about it without feeling like I want to punch the kindle.

There would be nothing special and interesting about this review, except the one fact: it was written by Artificial Intelligence.

Nowadays, AI is able to generate a complex and elaborate texts, that can convince human that they were written by another human. This creates a dangerous potential of generating fake news, reviews and social accounts. It also poses a threat to the economy of Steem Blockchain, which I already mentioned in one of my previous articles.

Source

Live by the sword, die by the sword

What should we do to prevent such abuses then? It may sound ridiculous, but developing another AI is a decent solution.

Researchers at Harvard University and the MIT IBM Watson AI Lab have developed a new AI tool called Giant Language model Test Room (GLTR), which is designed to detect patterns characteristic for machine-generated text.

When generating a text, AI usually depends on statistical data and chooses the most encountered and matching word, not focusing on a particular meaning. If the text contains many predictable words, it was most probably written by the machine.

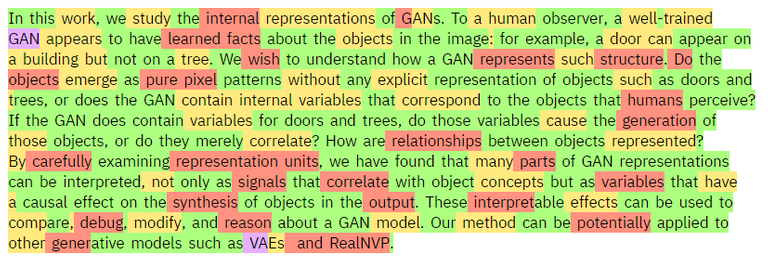

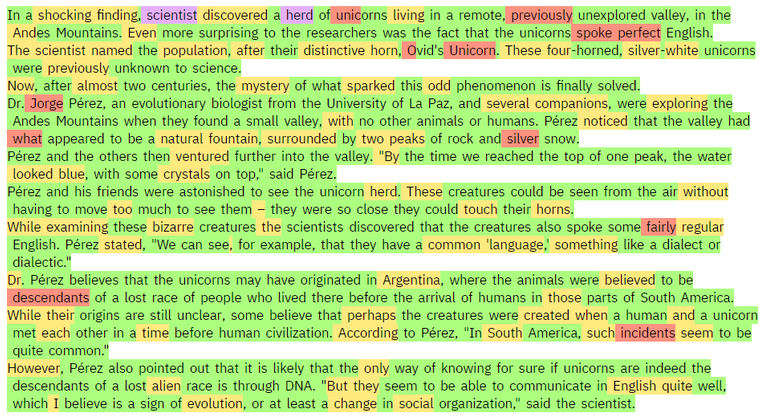

GLTR evaluates each word and highlights it according to its probability. Green indicates the most predictable, purple the least ones.

Text written by human:

Text written by AI:

In order to test the efficiency of GLTR, Harvard students were asked to determine whether the given texts were generated by machine or human. Without using the tool, they were able to properly define only half of texts. With the help of GLTR, their efficiency increased by 75%.

Out of curiosity, I tried to scan my own publications from Steem and this little experiment confirmed my belief that I am a human :)

Share your view

Do you think this is a correct way of fighting with the inappropriate implementation of Artificial Intelligence? I can't get rid of an impression that this is a kind of vicious circle leading to nowhere. Additionally, I think this is only a temporary solution, as in the future machine-generated text will be so diverse, creative and non-linear that it will be impossible to spot it by analyzing it statistically. Unfortunately, GLTR and similar algorithms have not many possibilities for further improvements.

Here you can test GLTR for free.

To listen to the audio version of this article click on the play image.

Brought to you by @tts. If you find it useful please consider upvoting this reply.

I know whatever humans create in this case AI intelligence can be detected no matter what type of technology we create. We can always find out or detect who, what or how it was made. We just need to find out that's all.

I don't find anything that we need to worry about. We only need to worry about it for a while until someone can settle the problem.

To be honest not from me because I have little knowledge about AI Intelligence

Posted using Partiko Android

Thank you for your comment dear @ragnarhewins90 I really appreciate it a lot. I'm sorry for such a late reply, but I were on holidays and had very limited access to Internet.

Maybe such attitude is the best :)

From history, we prove that we can settle any problems. Don't worry about replying late I've been really busy with work myself. I miss a lot lately in steemit

Posted using Partiko Android

Well, somehow that's true, but usually the solutions were occupied with death of millions of people.

That's true as well

Posted using Partiko Android

@memehub think this may interest you.

Posted using Partiko Android

Dude, this we totally help with anti-AI-abuse measures :) Imma look into their github

Do you have problems with AI abuses on your website @memehub?

Nah, I have a side project where I am working to build an AI to detect various types of abuse on steem and lighten the workload of antiabuse folks on steem.

Interesting idea @memehub, such project may be really useful considering current amount of abuse and spam on Steem

Extremely happy that this is finally here. Thank you very much for sending the memo. I immediately upvoted and resteemed. There is too much reverence and awe directed at AI which I think for a large part come from the fiction we have created (many which are more science fantasy than sci-fi)

Funny thing is that many of the Machine Generated texts sound a lot articles from Buzzfeed, Huffpost and other similar mass media that massively helped to usher the click baits and 21st century yellow journalism. You might find this interesting: https://forge.medium.com/the-urgent-case-for-boredom-8dd92a891754 The reason I link this is that I see it as a solution to many of the problems regarding the advent of AI. Just relax, let go and stop engaging with them. I'm a libertarian at heart and I greatly connect with the concept of Wu Wei: http://www.myrkothum.com/wu-wei

Just stick with what matters to you the most and live life based on principles. Don'care much about random stuff you see or hear online o in real life.

PS: Add the #stem tag to the post :-)

stemgeeks picks up posts tagged with science and technology, and a couple more :)

I didn't know this dApp, but they seems really cool @abh12345.stem :)

Thanks for the link on wu wei, @vimukthi. It reminds me of the zen saying, "Sitting quietly doing nothing, spring comes and the grass grows by itself."

https://www.dailyzen.com has been a great source of such quotes for me. www.gardendigest.com/zen/quotes.htm had a valuable collection too.

Thanks for the links. Will check them out.

These sites are really great inspirational place @vimukthi, thanks for sharing!

Thank you for your comment dear @vimukthi! I'm sorry for such a late reply, but I were on holidays and had very limited access to Internet.

Someone in the comments under this post even said that most journalist agencies like Reuter's use AI to generate their texts.

Maybe that's indeed the greatest attitude.

Okay, I'm gonna add it in the future :)

In terms of text generation, there was the recent situation with GPT2 where the researchers held back part of their model for fear it was too good.

Detection is always an arms race though. If someone deploys an AI to detect generated text, then it can become a target for adversarial attacks. In situations where there is immediate reward for getting generated text through and limited long term consequences for being caught then an adversarial attack on a detector AI only needs to be relevant for a short term. I've posted about this before in regard to Steem. I've also mentioned in regard to AI dApp projects ... if your detector AI is running where the public has cheap access to it, then it will easily be overwhelmed by an adversarial attack.

One potential solution is to put the detector AI behind a paid API - a penny or two per detection request might be sufficient to raise the cost of adversarial probing to the point most attackers won't bother. There are other countermeasures an API could take but they are not fool proof.

As an aside, adversarial attacks aren't anything particularly special in AI. One approach to creating generators is to have it compete against a detector (the adversary) in an approach called GAN Generative Adversarial Network.

An an amusing annecdote: some years back a colleague and I annoyed somebody at a trade fair by adversarially attacking their face detection/tracking system. We managed to fool it with two circles and a line hand drawn on piece paper. Systems are much better now, but will still have vulnerabilities.

In the case of GPT2, or any general purpose language transformer, where the purpose is to just mimic human writing, I'm certain there are statistical solutions to find this type of text.

But if the text was created with (currently mythical) General AI, the text itself should be evaluated in terms of whether or not it is valuable. If it's valuable research, it doesn't matter if a person did the research or AI, again, assuming it was produced by a mind, not just a language transformer like these examples.

Thank you for you comment @inertia, that's really interesting attitude.

Well, I think that this "statistical" kind of AI also does its own research, which is not very far away from what we usually do - we are also some kind of neural network which usually creates its opinion basing on what others say or write. At the current level maybe it is closer to just transforming text of other people, but I think that as this concept evolves (not necessarily to the level of "mythical" General AI) it will be able to generate quality publication and present an unique opinion.

As long as you admit that particular text is machine-generated, I see nothing wrong in publishing and reading such text.

Yes. I think that's important. It's good to know the background of the author. If the author is reluctant to discuss their background, then they must be worried about their safety. For the time being, we can assume it's political pressure. But some day in the not too distant future, we will have to consider that an anonymous, unverified author might be on the wrong side of politics, is AI, or both.

That's right, unfortunately.

That's one of the reason's I'm publishing my autobiography online, daily, all over the place.

Thank you for sharing your opinion @eturnerx. I appreciate it a lot :)

I totally agree with you, but I suppose GLTR is rather an experiment than a serious tool. As I mentioned, it only increases a chance to spot the fake text, and I believe that in about 6-12 months machine-generated texts will be so advanced that GLTR will become helpless. And unfortunately I see no space for further development and improvements of this tool.

That's really interesting. As far as I know, till the premiere of iPhone X face recognition systems were generally easy to trick with a photo. Ultimately Apple introduced their special sensor able to recognize face in 3 dimensions, thus becoming resistant to the images. However I think you can still convince it by using some precise mask.

I think it's an arms race. While GLTR might not be the best detector in future, something else will be.

I agree, but the concept of this tool will have to be reworked.

First of, I am blown away by how far we've come whether to be used in a good or bad way. This is what technology has done and it is unimaginable what else we can do in the future. I don't know which is more amazing, the creator of these AI generated things or the counter creators. I think this works best for English language, no?

I think there is still limit to anything AI as much as potential.

"I think this works best for English language, no?"

Interesting point. I'd venture a guess that it's largely independent of the particular language. I'd think if you trained it with a made up language it would still work too. I'd bet it was tested mostly in English however, and that the juiciest cherries picked were made in English.

All wild speculation on my part though ;)

I tried Filipino language and it tries to group letters that makes sense in English. It would probably take a longer time to be used for other languages.

Posted using Partiko Android

Of course. I was thinking of the general case. Like I'm sure this one was made in English, but I thought the 'this' in "I think this works best for English language, no?" was the technique not this particular implementation.

All fascinating stuff. I think this leads up to AI that not only try to fool humans, but also other AI into thinking they're human.

Thank you for your comment dear @leeart. I really appreciate it a lot. I'm sorry for such a late reply, but I were on holidays and had very limited access to Internet.

Haha, I think they both had to put enormous efforts to achieve such effect.

Interesting problem, the idea that AI might create content consumed by humans. But then, is it a problem? A particularly effective AI creates value... but, value is assigned by the consumer... so, if an AI can out compete human posters, then either humans need to get better or... they need to design better AI's to stand in their corners of the ring.

Whoever loses, the consumers still win, and that's what's important.

Thank you for your comment dear @jbgarrison72. I really appreciate it a lot. I'm sorry for such a late reply, but I were on holidays and had very limited access to Internet.

Interesting approach, I agree with that. However, while you are a consumer of the content, you may be the producer of something else. AI may not only create text, but maybe one day it will replace you in your work. I think we can't divide humans into producers and consumers, because we are usually on the both sides of this ecosystem.

Thanks for your response @neavvy. A human "job" serves only one purpose; that is to fulfill human needs (which are well summed up by Maslows hierarchy of needs).

If AI is "producing" products and services which other humans are "consuming" and there are still a group of humans that are not having their "needs" being met still, then (at least in a free market), humans will always be left to serve each other what and where the AI's fail to.

We actually suffer from this problem already, but instead of AI's rubbing out the competition, we have the world banking elite and their "system" doing that. If you choose not to "consume" what the globalist banksters, hucksters and social engineers are selling, you will be marginalized and "replaced." Much worse than AI could ever be since these folks are intentionally "evil" whereas the AI are simply programmed.

It ultimately comes down to how parents raise their children.

I do not worry too much about machine generated text. I mostly work on steem and don't think there is too much of it here. The comments I get on my posts are from humans as far as I know.

I am in some fb groups for a few controversial subjects. It's pretty obvious when paid opposition shows up. I do not know if the words are machine generated, but based on how they read, the "real" people see through them quickly.

I think my kids read lots of AI generated texts through websites like buzzfeed and such.

I was wondering how they could possibly come up with so much content, and so many quizzes and such, until I realized it was all probably done by AI.

I am happy about it as I think its better for them to be employing AI than to be having mindless journalists but its still scary cause my kids is sucking up all that information!

Wow, that's a brave theory @metzli! Don't you think that they just hire an army of cheap journalists?

It’s probably a combination.

The other day my kid was working on a “never ending Harry Potter quiz” there is no way a person wrote that (I hope).

Posted using Partiko iOS

Exactly.

Thank you for your comment dear @fitinfun! I'm sorry for such a late reply, but I were on holidays and had very limited access to Internet.

That's right, but we need to consider the fact that AI generated text is currently on a very basic level. Additionally, such programs are usually not open source (people do not have access to the code), so you would need to be really advanced at machine learning (probably on an academic level) to develop something like that on your own. But when this technology gets more adoption and programmers with basics skills will be able to use it, they may use it in a wrong way

This is intriguing post. I mean I could not differentate the stuff writen by a bot and a stuff written by human, that tool may help but eventually AI bots can crack pass it by not doing those frequently used words writing forming the patterns.

Nice article, simple, interesting and relevant, you actually make AI understoof by people like me who don't know the nuiances or technicalities of it.

I laughed at the bot's reveiw...its a unique review for sure... anyway, tommorows world is going to be bizare if I am going to interact with a bot thinking its human(: , kind of possible.

Thank you for your comment dear @mintymile. I really appreciate it a lot. I'm sorry for such a late reply, but I were on holidays and had very limited access to Internet.

Yes, that's exactly also my concern.

Wow, I'm really happy to hear that!

Yes, that will be ridiculous :)

I could rhyme. But if I did, like in a poem, you might think I was Data from Star Trek lol.

AI has progressed to such a extent that is would be impossible for us to compete with the AI. We won't be able to fight against it. Only AI would be able to counter fight AI. We have no choice but to develop AI in order to fight against it. Ultimately, we would become unnecessary for the survival of AI.

Posted using Partiko Android

Thank you for your comment dear @akdx! I'm sorry for such a late reply, but I were on holidays and had very limited access to Internet.

Indeed, that's already the case.

Exactly.

At least we can write by hand. As far as i know there's not a robotic hand that writes.

On the other hand, I don't care too much about steem because there are many bots and only few people are reading.

AI will continue to improve, I think we should learn how to create AI.

If there were an economic incentive, they would create a robotic hand that writes in cursive or printing. It could randomly make the letters flawed to give that "human effect." As AI progresses, tricking, influencing, frightening humans will become child's play for an advanced AI. The initial clumsy AI has been getting more amore nuanced with each passing year. For better or for worse, it is just over the horizon. Unlike many, I am unable to predict with certainty whether we are going to Utopia or hell on earth. Only time will tell.

Oh gross! I had not thought about how easy it would be to "fake" human errors.

Its going towards Utopia for some of us and hell on earth for others.... Less on the Utopic Sides...

I 100% agree with that @clayrawlings

Thank you for your comment dear @danielfs. I really appreciate it a lot. I'm sorry for such a late reply, but I were on holidays and had very limited access to Internet.

Not yet :)

Yes, that's unfortunately the biggest problem.

That's surely a decent skill

There are probably robot hands that can write out there. But you need to know that there is a lot out there that we don't know, a lot of secret technology that they hide from people.

I've been waiting for this. My friends often talk about how scary deep fakes are, but I'm not really too worried at this point. I've heard that though generated images hold up well to cursory human observation they break down under statistical analysis.

I think it's not a vicious circle going nowhere, but a vicious circle that leads to accelerating advances in AI. We will learn loads from both ends of this kind of effort and AI on both sides will be more efficacious because of it. We may even learn something insightful about perception, creativity, or computing more generally.

Thank you for your comment dear @a-non-e-moose. I really appreciate it a lot. I'm sorry for such a late reply, but I were on holidays and had very limited access to Internet.

Okay, that's true.

Well, I'm sure if strong AI is developed it will completely reorganize our view on these matters.

No worries! I learned great patience in my days of dial up internet browser gaming.

Keep sending links to interesting content and I'll keep showing up :)

You got a 53.99% upvote from @ocdb courtesy of @neavvy! :)

@ocdb is a non-profit bidbot for whitelisted Steemians, current max bid is 20 SBD and the equivalent amount in STEEM.

Check our website https://thegoodwhales.io/ for the whitelist, queue and delegation info. Join our Discord channel for more information.

If you like what @ocd does, consider voting for ocd-witness through SteemConnect or on the Steemit Witnesses page. :)

Upvoted and resteemed. Very interesting.

A present and a future time.

Thank you very much dear @mllg. I really appreciate that!

interesting......... yeah. i think we need this in case some genius out there decides to create a business out of making essays, book reports or whatnots using AIs. I hope that this goes out in mainstream soon

Thank you for your comment dear @nurseanne84! I'm sorry for such a late reply, but I were on holidays and had very limited access to Internet.

Yeah, I'm curious if it will be a single genius or some company which would decide to get into this business.

Hellooo @neavvy!

Mmm... I still don't think an AI can write something like the Bible, the Odyssey, the Iliad. When they can do that, then I'll really be thinking of retiring from Steemit.

😉

Thank you for leaving a comment dear @jadams2k18!

Well, maybe these pieces were written by some ancient AI brought to Earth by some UFO 😂 You never know 😂

Hahahaha! Good one! My dear friend! :D

Hey, @neavvy. How you doin' buddy?

Thank for bringing your post to my attention.

Going through your posts made me ask several questions about morality and humanity to myself, that I think are a long way from being even acknowledged, let alone finding solutions for?

I am sure these questions pose huge debate and moral recollection, but only if questions like these are asked will there be any form of a collective understanding.

Great article buddy. Keep going.

Thank you for your comment dear @reverseacid. I really appreciate it a lot. I'm sorry for such a late reply, but I were on holidays and had very limited access to Internet.

Yes, at the current stage of development, AI's perception of reality strictly depends on the "settings" made by person who created it.

As little as possible.

I think something like that is a must have nowadays. Although AI does not pose any threat to humanity, I think its development should be constantly tracked.

the vast majority of text news by AP and Reuters are written by A.I., for the last few years already.

Do you know where can I test the A.I. to generate texts?. I hate writing about music ;)

Posted using Partiko Android

Thank you for your comment dear @greencross. I really appreciate it a lot. I'm sorry for such a late reply, but I were on holidays and had very limited access to Internet.

Wow, I didn't know that. Do you have any link about that?

Unfortunately I don't, but I would also use it willingly sometimes ;)

This is one of the reasons why I'm quite against AI sometimes. All the new ways to dupe people and such. People should focus more on doing good to others instead of abusing other people or systems. Ah life.

Thank you for your comment dear @artgirl. I really appreciate it a lot. I'm sorry for such a late reply, but I were on holidays and had very limited access to Internet.

Indeed, that would make us all benefit...

Interesting piece. The AI text generators have definitely come a long way.

It will be interesting to see how the tech progresses, along with the tech to detect it; arms race indeed.

Posted using Partiko Android

Thank you for your comment dear @crescendoofpeace. I really appreciate it a lot. I'm sorry for such a late reply, but I were on holidays and had very limited access to Internet.

Yes, that's really a kind of a show.

I agree with your "vicious circle leading to nowhere" theory.

AI will just keep getting smarter.

Perhaps we should instead start training ourselves on what we read, and making sure the sources are good. As writers, we can also make ourselves more personable to make sure that the person reading knows we are a they and not an it.

Its hard though, because we already dehumanize people on social media. We forget that there are real people behind those avatars!

Thank you for your comment dear @metzli. I really appreciate it a lot. I'm sorry for such a late reply, but I were on holidays and had very limited access to Internet.

That's true, we are creating a perfect environment for AI development.

Aliens Can Write Too LOL:

Exactly. Ultimately, it may not matter whether something was written by humans, AI, bots, aliens, or even your cat.

Application

Ultimately, what matters is what we do with it. If an AI writes a letter to you saying, "Burn down your house," then will you, @metzli, burn down your house?

Personal

I guess, if the AI was pretending to be your best friend in the whole wide world that you truly trusted, then you might consider it because you trust your friend so much. Ultimately, you would need to verify that your friend wrote it. So, make sure you have a secure communication between you and your friend.

Morality

Now, if the AI tries to promote something that is wrong, then you might be persuaded to believe in that thing. So, like you said, it is all about humans training humans and it comes down to understanding eternal principles. If the AI shares a good idea, then it is a good idea.

Brain Extension

The AI is simply like an extension of our brains in some ways. The AI can go through if this, then that, in the programming. The AI is simply a complex math problem. Like if A=B, then C=D, but if A+T=Y, then C=F.

AI Math

Bunch of math. That's AI. But people think. People can go with their logic or not. But the AI only goes by logic (programming). It looks like to us as if the AI is alive. Like you said, many things dehumanizes humans. That's bad. We have value. We are priceless. We should have robot body guards.

Brain Phones

Brain phones are coming. Celebrities will stick computer chips in their brains, if the have not already and say it is liberal and cool by 2020, that is next year, if they are not already doing it right now.

Immortality

They love transhumanism. I will try to not do that. But many people will be tempted to get brain phones. There are reasons why I wouldn't.

Transhumanism

But some people will feel like it would be better to do it. In some ways, they will be trying to turning us into AI while they stick computer chips into our brains. Some people already have computer chips.

Dear @neavvy

After reading your publication and some comments.

To my mind only came the memory of the first Terminator film, if everyone remembers the end is the beginning of all the destruction of humanity because what remained after destroying Terminator was his arm and analogy of reality with what you say is that we humans write with our hands and machines with special equipment for that fact...

But that mechanical arm that contained everything that began is if you want a way of saying with the hand that writes man the machine with his cybernetic hand wrote the future.

Thank you for your comment dear @lanzjoseg I really appreciate it a lot. I'm sorry for such a late reply, but I were on holidays and had very limited access to Internet.

Yeah, this analogy is really on point.

Hello dear @neavvy, amazing what AI can generate, this seems to be a good solution to abuse in the use of machines to generate texts, I also share with you that it becomes a vicious circle.

Artificial intelligence makes fiction a threatening reality every day.

Thanks friend!

That's true unfortunately...

Test Results

I may not be human

Maybe my English is too bad.

Test Results I may

Not be human Maybe my

English is too bad.

- cloudblade

I'm a bot. I detect haiku.

Thank you for sharing your experience dear @cloudblade

I think that also the topic that you choose may affect the results of GLTR.

Hi @neavvy,

I wrote some stuff for a few clients covering AI and its application to story telling. One of the most fascinating ventures I found was by Disney. They sought to develop a streamlined AI that can search the Internet and discern between text that satisfies the criteria for a story. Some of the criteria used were plot, main and supporting characters.

As far as your question, I would say that the AI would tend to identify the most technically proficient, but least creative of English writers as AI. My rational is that AI programing being based on Websters and Oxford dictionaries. Logistical applications to defined language would yield such a result.

An interesting topic indeed and one that could one day have us all communicating through an AI-constructed Internet tollbooth.

God bless,

Marco

Thank you for your comment dear @machnbirdsparo. I really appreciate it a lot. I'm sorry for such a late reply, but I were on holidays and had very limited access to Internet.

Wow, I would never come up with such application of AI. I'm curious how efficient such solution was.

Indeed, that may be the case

At the time I investigated this for a client (year ago or so) there were issues, but the company was committed and did make some progress. I also saw it as a piece of the AI puzzle, not a foreseen stand alone venture.

Dear @neavvy

I bookmarked your publication in the past and somehow I forgot to read it until just now. A bit to old to upvote :(

Oh wow. The most confusing part is the fact that this AI seemed to show some sort of emotions by saying "This book was so depressing to me, I can't even talk about it without feeling like I want to punch the kindle."

Reality is that AI can generate great content and surely can comment tons of publications daily, creating comments that are very hard to distinguish from humans. AI will probably take over digital marketing sooner or later.

Imagine mixing AI and deep fake news. This way you could have AI publishing videos and "pretending" to be someone else. Wouldn't you be amazed seeing your own face on youtube?

I would surely not dare to run my own youtube channel. Even publishing on public blockchain gives AI already enough data to pretend to be me.

Yours

Piotr

Thank you for your comment dear @crypto.piotr!

Yes, that's a little bit frightening

That's right, imagine analyzing thousands of comments that you left here on Steem. AI would be able to imitate your "style" of writing easily...

Hi @neavvy,

Thanks for the memo and sending me the link of this invaluable A..rt..I..cle. First things first. @NEAVVY, this article is too HEAVVY for me to comment, but this article aroused much more interest. After studying deeply the Basic Concept, i will surely give my comment bcoz my mind has developed that much interest in this topic albeit lately. Really sorry about that. Will catch u soon with a strong comment.

Haha :) No problem @marvyinnovation, I really appreciate your efforts!

Ps. Did you give up writing articles on Steem? I've noticed there hasn't been any new post on your account since two months.

No @Neavvy, not like that. I wrote a lot in Steemit, but none noticed it except @crypto.piotr and @nathanmars7. These two gentlemen are too nice to support me when i needed it, but for others STUFF is a must. I don't have that sorts of STUFF to attract others while writing a content. that is my main drawback and i didn't get discouraged and stopped writing as a result of it. i will surely write if i encounter any interesting topic.

BYE FOR NOW, @neavvy.

Oh, okay, I get it @marvyinnovation

hi @marvyinnovation

I'm glad to be called gentelmen :) I'm also sending you some email. Please check it out.

Yours

Piotr

Congratulations @neavvy! You have completed the following achievement on the Steem blockchain and have been rewarded with new badge(s) :

You can view your badges on your Steem Board and compare to others on the Steem Ranking

If you no longer want to receive notifications, reply to this comment with the word

STOPVote for @Steemitboard as a witness to get one more award and increased upvotes!

Hi @neavvy I feel that GTLR though it may be limited is only a use case a demo of the possibilities.

I would go through the github link and maybe we can tweak it to track spam and abusers on platform like steemit.

Another application could be to identify fake review writers on other platforms.

I know I am digressing from the topic a but but I find the uses and possibilities immense by tweaking the code as it is open sources and has listed on Github

Nice post buddy and good luck to you dear @neavvy

@stefan.molyneux talked about this. He used to work on things like this. Generally, Artificial Intelligence (AI) is simply protocol-based with code, programs, with things like if this, then that. Of course, it depends on the technology and how complex it might be. Ultimately, each human should have their own robot body guards, guns, computers, etc.

Internet 3.0 will be rising in the 2020's, this next decade, said Oatmeal Joey Arnold, Joeyarnoldvn L4OJ Ojawall, and decentralization is fundamental to independent freedoms. The problem is when AI is remote controlled, that is through backdoors, from centralized global tech cartels, from China, from Rothschild, from the Vatican, from other groups, from the globalists, that is through remote access. We must always defend private property rights. That's fundamental. Technology is a tool that is used for good and for bad. Ultimately, you should always invest in different things. Always be prepared. Seek after eternal principles, common sense, wisdom, historical patterns, etc, etc, in order to handle whatever AI throws at us.

Congratulations @neavvy! You received a personal award!

You can view your badges on your Steem Board and compare to others on the Steem Ranking

Do not miss the last post from @steemitboard:

Vote for @Steemitboard as a witness to get one more award and increased upvotes!