DeepFake FaceSwap AMD Windows 10 Pro How To Guide with Justin "Teletubbies" Sun Teaser!

Some time ago, I tweeted my interest in creating a faceswap of Justin Sun with the Teletubbies sun baby.

Well friends... The day is now upon us!🌞

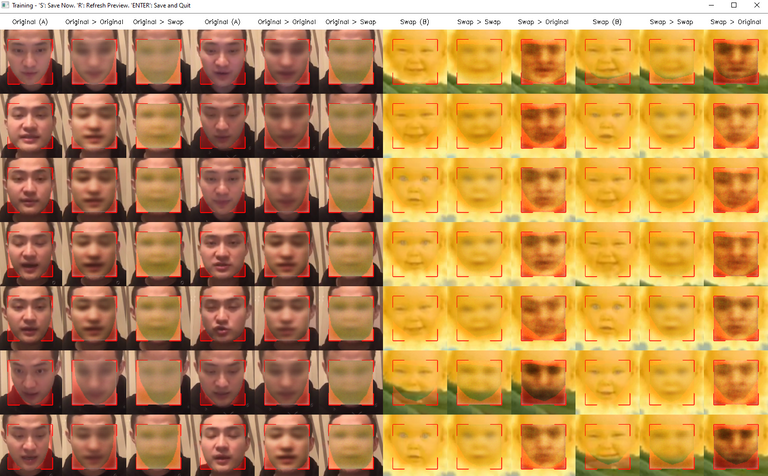

A preview of the face conversion models in progress.

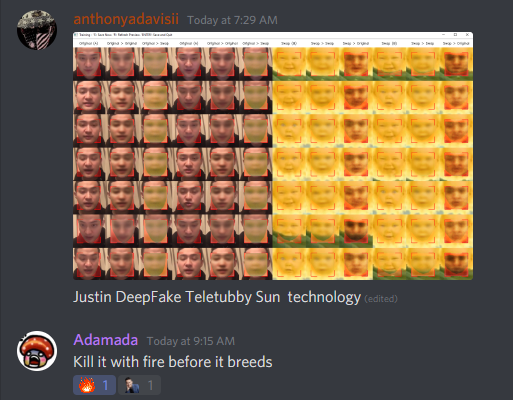

@adamada thinks my work is fire... or should be on fire. Probably both! :P

If my abominable deepfake creations had sentience... Created from Source.

So, what is a deepfake?

Deepfakes (a portmanteau of "deep learning" and "fake") are synthetic media in which a person in an existing image or video is replaced with someone else's likeness. While the act of faking content is not new, deepfakes leverage powerful techniques from machine learning and artificial intelligence to manipulate or generate visual and audio content with a high potential to deceive. The main machine learning methods used to create deepfakes are based on deep learning and involve training generative neural network architectures, such as autoencoders or generative adversarial networks (GANs).

For an example of deepfake technology at work on Hive, I refer you to my friend @battleaxe's post, in which @inertia used an app to swap her face with Wonder Woman's. I'm just including a still, so you'll have to check her post out for the gif in all its glory.

https://hive.blog/hive/@battleaxe/inertia-deep-faked-me-and-i-liked-it

Don't forget to check her blog out while you're at it!

Installing FaceSwap using venv on Windows 10 - AMD GPU

I will be documenting the steps that I used to install FaceSwap on Windows 10 Pro Version 10.0.19041 Build 19041. I fumbled around with this a bit, not fully understanding how venv worked in Windows, but eventually got the hang of it. Things should run much more smoothly for you but, if they do not, feel free to reach out and I will try to help you troubleshoot. I see a world of comedic possibilities with Ned and Justin deepfakes.

You may or may not require the following. I installed them during my initial attempt and decided to keep them.

CMAKE

Link

Visual Studio with C++ compiler

Link

1. First, you must install Python 3.7...

Note: You may or may not need to select the long path option in the installer. If you are in doubt, go ahead and enable it.

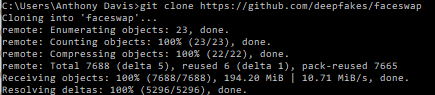

2. Clone my faceswap repository fork using the following:

git clone https://github.com/anthonyadavisii/faceswap

I made a couple of adjustments to the requirements files, both to get past an error I ran into and to simplify the install.

3. cd faceswap

4. Create the virtual environment with the following:

py -3.7 -m venv faceswap_env

5. cd faceswap_env\Scripts

6. Activate the virtualenv:

activate

Your command prompt should now be prefixed with (faceswap_env).

7. Copy requirements_amd.txt and _requirements_base.txt into the faceswap_env\Scripts directory and then issue the following to install requirements into your virtualenv:

pip install -r requirements_amd.txt

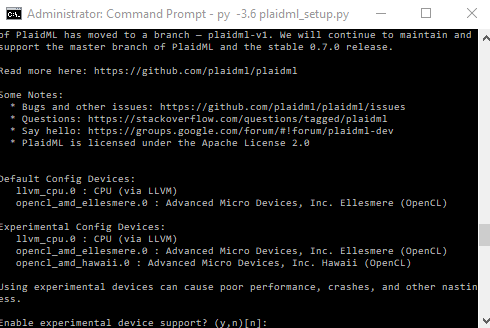

8. Execute the plaidml_setup script, which pip installed into the virtualenv.

plaidml_setup

9. Select 'y' to enable experimental device support.

10. Select the number that matches the GPU architecture of the desired card. In my case, I am choosing opencl_amd_hawaii. Save setting with 'y'.

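11. Issue the following command to set the KERAS_BACKEND environment variable. Note that setx saves the value for future command prompt windows only, so the window you are typing in will not see it until you open a new one.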

setx KERAS_BACKEND plaidml.keras.backend

12. Deactivate your virtual env by executing the following in the faceswap_env\Scripts directory:

deactivate

Now, you have a virtualenv ready to activate for some shenanigans!
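If you want to double-check the setup before moving on, here is a minimal Python sketch that looks at the three things the steps above were meant to achieve: an active virtualenv, Python 3.7, and the KERAS_BACKEND value from step 11. The function name check_environment and the file name check_env.py are my own placeholders and are not part of faceswap. Save it as check_env.py, open a fresh command prompt (so the setx value is visible), activate faceswap_env again, and run python check_env.py.

```python
import os
import sys


def check_environment(prefix, base_prefix, version_info, environ):
    """Return a list of problems found; an empty list means every check passed.

    Hypothetical helper for this guide, not part of faceswap or PlaidML.
    """
    problems = []

    # Inside a venv, sys.prefix points at the environment folder while
    # sys.base_prefix still points at the Python install it was created from.
    if prefix == base_prefix:
        problems.append("No virtualenv is active (run activate first).")

    # The guide installs Python 3.7, so flag any other major.minor version.
    if tuple(version_info[:2]) != (3, 7):
        problems.append(
            "Python {}.{} is running, but the guide uses 3.7.".format(*version_info[:2])
        )

    # Step 11 stores this value with setx; only newly opened prompts see it.
    if environ.get("KERAS_BACKEND") != "plaidml.keras.backend":
        problems.append("KERAS_BACKEND is not set to plaidml.keras.backend.")

    return problems


if __name__ == "__main__":
    issues = check_environment(
        sys.prefix, sys.base_prefix, sys.version_info, os.environ
    )
    for issue in issues:
        print("PROBLEM:", issue)
    if not issues:
        print("Environment looks ready for faceswap.")
```

The checks are passed in as arguments rather than read inside the function so that it is easy to try with made-up values. It only inspects the Python side of things and does not confirm that PlaidML can actually talk to your GPU.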

This concludes part 1 of my tutorial. In the next one, I will show you how to use faceswap to extract frames and faces, train your model, and perform the conversion.

Haha the deep fakes are getting out of hand.

You either die fighting the madness or live long enough to be part of the madness.
