AI Robots: An AI Robot That Rebels Against The User!

Scientists have developed an intelligent robot that first predicts what users intend and then frustrates them by rebelling against their plans.

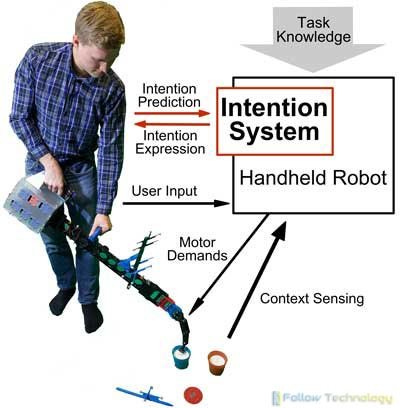

In a new development in human-robot research, computer scientists at the University of Bristol have built a handheld robot that first predicts and then frustrates users by rebelling against their plans, thereby demonstrating an understanding of human intention.

In an increasingly technological world, cooperation between humans and machines is an essential aspect of automation. This new research shows that frustrating people on purpose can be part of the process of developing robots that collaborate better with users.

The Bristol team has developed intelligent handheld robots that complete tasks in collaboration with the user. Unlike conventional power tools, which know nothing about the tasks they perform and are fully under the user's control, the handheld robot holds knowledge about the task and can help through refined motion, guidance, and decisions about task sequences.

The latest research in this area, by PhD candidate Janis Stolzenwald and Professor Walterio Mayol-Cuevas of the University of Bristol's Department of Computer Science, explores the use of intelligent tools that can bias their decisions in response to the intention of users.

This research is a new and interesting twist on human-robot research, as it aims to first predict what users want and then go against those plans.

Professor Mayol-Cuevas said: "If you are irritated with a device that is meant to help you, this is easier to detect and measure than the often vague signals of human-robot cooperation. If the user is annoyed when we instruct the robot to go against their plans, we know the robot understood what they wanted to do."

"Just as short-term predictions of each other's actions are essential to successful human teamwork, our research shows that including this capability in cooperative robotic systems is crucial to effective human-machine cooperation."

For the experiment, the researchers used a prototype that can track the user's eye gaze and derive short-term predictions about intended actions through machine learning. This knowledge is then used as a basis for the robot's decisions, such as where to move next.
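To make the gaze-to-prediction idea concrete, here is a minimal Python sketch. It is not the researchers' model: the study used machine learning on gaze data, whereas this stand-in simply takes a majority vote over recent gaze samples. The function name `predict_intended_target`, the `window` parameter, and the input format (one object label per gaze sample, or `None` when the gaze rests on no object) are all assumptions made for illustration.

```python
from collections import Counter


def predict_intended_target(gaze_samples, window=30):
    """Guess the intended object from recent gaze samples.

    Hypothetical heuristic: the object looked at most often within
    the last `window` samples is taken as the user's next target.
    """
    # Keep only the most recent samples that actually hit an object.
    recent = [g for g in gaze_samples[-window:] if g is not None]
    if not recent:
        return None  # no usable gaze data, so no prediction
    target, _count = Counter(recent).most_common(1)[0]
    return target
```

A real system would likely weigh how long and how recently each object was fixated, but the shape of the interface stays the same: gaze history in, predicted target out.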

The Bristol team trained the robot using a set of over 900 training examples from a pick-and-place task carried out by participants.

Central to this research is the assessment of the intention-prediction model. The researchers tested the robot in two conditions: rebellion and obedience. The robot was programmed to either follow or disobey the predicted intention of the user. Knowing the user's intention gave the robot the power to rebel against their decisions. The difference in frustration responses between the two conditions served as evidence for the accuracy of the robot's predictions, thereby validating the intention-prediction model.
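The logic of the two conditions can be sketched in a few lines of Python. This is an illustration only, assuming a predicted target is already available; the names `choose_robot_target` and `frustration_gap`, the `"obey"`/`"rebel"` mode strings, and the idea of numeric frustration ratings are hypothetical and not taken from the study.

```python
from statistics import mean


def choose_robot_target(predicted_target, candidates, mode):
    """Pick where the robot moves, given the predicted user intention."""
    if mode == "obey":
        # Obedience: go where the user is predicted to want to go.
        return predicted_target
    if mode == "rebel":
        # Rebellion: deliberately pick any other candidate, if one exists.
        others = [c for c in candidates if c != predicted_target]
        return others[0] if others else predicted_target
    raise ValueError(f"unknown mode: {mode!r}")


def frustration_gap(rebel_ratings, obey_ratings):
    """Mean frustration under rebellion minus mean under obedience."""
    return mean(rebel_ratings) - mean(obey_ratings)
```

The reasoning behind the experiment is that rebellion only annoys users if the prediction was right: a clearly positive gap suggests the robot knew what the user wanted, while a gap near zero would suggest the predictions were no better than chance.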

Janis Stolzenwald, a PhD student funded by the German Academic Scholarship Foundation and the UK's EPSRC, conducted the user experiments and identified new challenges for the future. He said: "We found that the intention model is more effective when the gaze information is combined with task knowledge. It raises a new research question: how can the robot retrieve this knowledge? We can imagine learning by demonstration or involving another human in the task."

In preparation for this new challenge, the researchers are currently exploring shared control, collaboration, and new applications in their studies of remote collaboration through the handheld robot. A maintenance task serves as a user experiment, in which a handheld robot user receives assistance from an expert who remotely controls the robot.

Former PhD student Austin Gregg-Smith designed and built the handheld robot used in the study. The robot is available as an open-source design through the researchers' website at www.followtechnology.pw
