Don't blame me, AI did it - GauGAN to Gigapixel

Artificial Intelligence

After my contest entry, I'll keep rambling about AI used for enhancing and generating images.

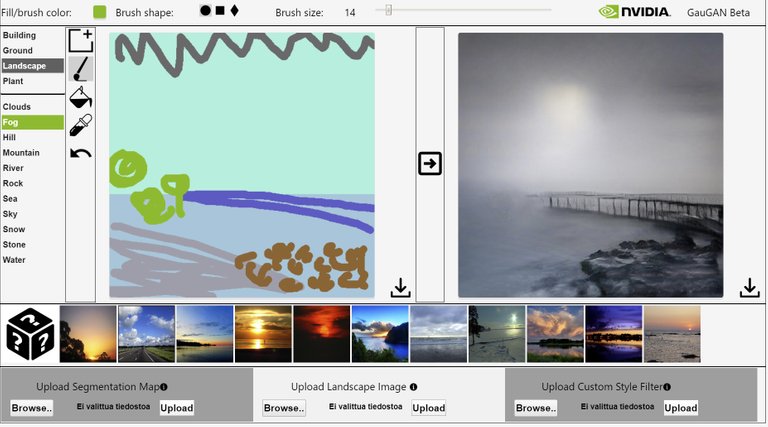

First things first: here's my entry for the GauGAN contest.

There's a wonderful art-making playground and time consumer out there: http://nvidia-research-mingyuliu.com/gaugan. You draw a few lines, choose a theme, and there you have it: art generated with AI.

The NVIDIA GauGAN beta is based on NVIDIA's CVPR 2019 paper on Semantic Image Synthesis with Spatially-Adaptive Normalization or SPADE.

The semantic segmentation feature is powered by PyTorch deeplabv2 under the MIT license.

Go check it out.

This is the picture I drew, which, in short, the AI used to make the image above.

I know! Cool, eh!

Thank you @drakernoise for bringing this to my attention. I've seen you participate in this contest a few times, but I hadn't gotten around to doing it myself. Until now. And a special thank you to @steemean for making this contest with the help of your father and other sweet and kind Steem folk here.

I'm sure that if you are interested in what GauGAN is made of, you have already found the link to the code. If you missed it, here it is: https://github.com/NVlabs/SPADE. And from there to NVIDIA's AI playground: https://www.nvidia.com/en-us/research/ai-playground.

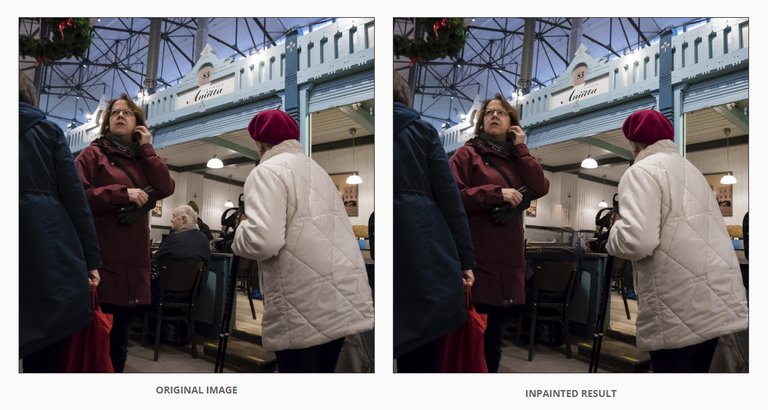

I didn't get much of a result by uploading a mugshot of one of my cats to Ganimal, but I did play around with the image inpainting and gave it a few tasks to solve.

Not perfect, but I have to say, that background would be tough if you had to do it by hand, stroke by stroke.


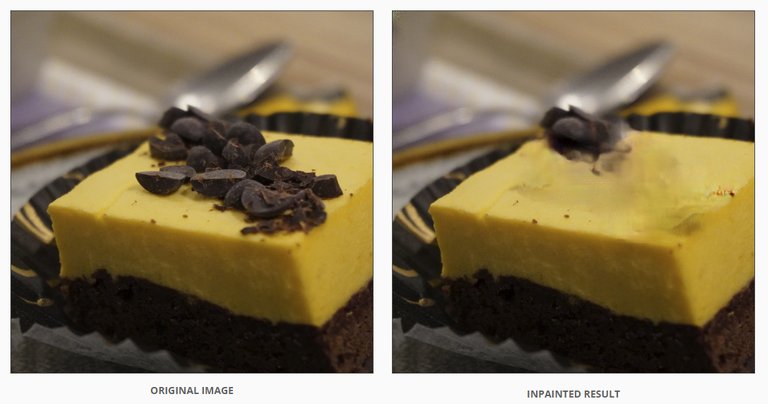

What is going on there with the ground on the pigeon photo? I thought this one would be easy. I definitely would have gotten a better result by doing it myself. And the cake, that surely wasn't a piece of cake. But then again, the code did ask for a landscape or a portrait photo. Let's try those then.

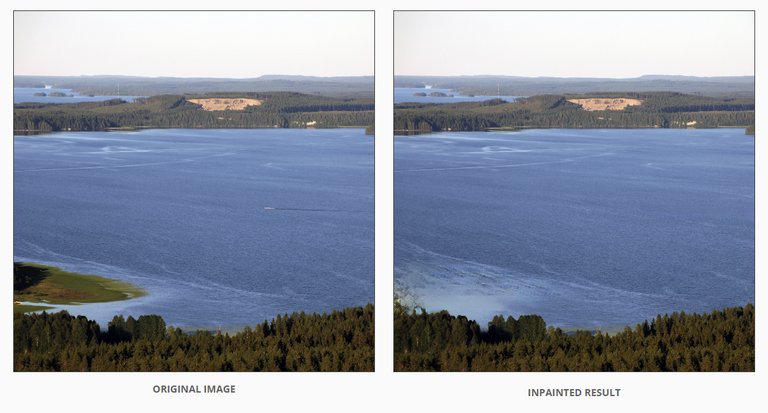


I don't like that garbage can, so let's get rid of it... And the result... If I squint and look at the photo from far away, I can imagine the inpainted result is good.

I do like boats on a lake, but I wanted to give it something really easy to manipulate. And that went okayish. Now the vanishing land, on the other hand: I'm seeing the same kind of pattern I saw in GauGAN. Too much repetition. I guess it isn't easy to learn what kind of repetition in a manipulated image is good and what isn't. But then again, the more images people upload there and the more feedback they give on the results, the better it gets. Or should. If the code is any good. And I'm sure there are better AIs out there too.

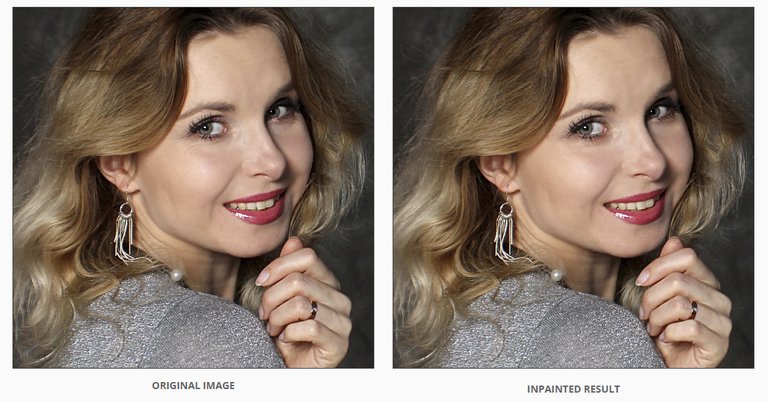

Last two photos. Wrinkles are beautiful, but let's give this face smoothing thing a chance.

Not bad, not bad. I would normally never do this to the models I photograph. Anna here is far more beautiful with the smile wrinkles near her eyes than without them. But if I did want to make them go away, this would have been so easy to do in Photoshop.

A slightly younger captain with a couple of extra, weird lines.

I'm done with playing with this.

The thing is, copying is easy. It's the inventing part that's hard. Inventing stuff that should be there if something else is taken away. For that you need experience. Or large piles of data to learn from how things should look.

Although I said a few times that I could do better with Photoshop, which has only my brain to rely on, I do think this is a pretty neat thing. This and all the other programs that don't only copy what they are told to copy, but learn to invent new things. No more too-obvious repetition, but pixels that originally weren't in the image and still look believable.

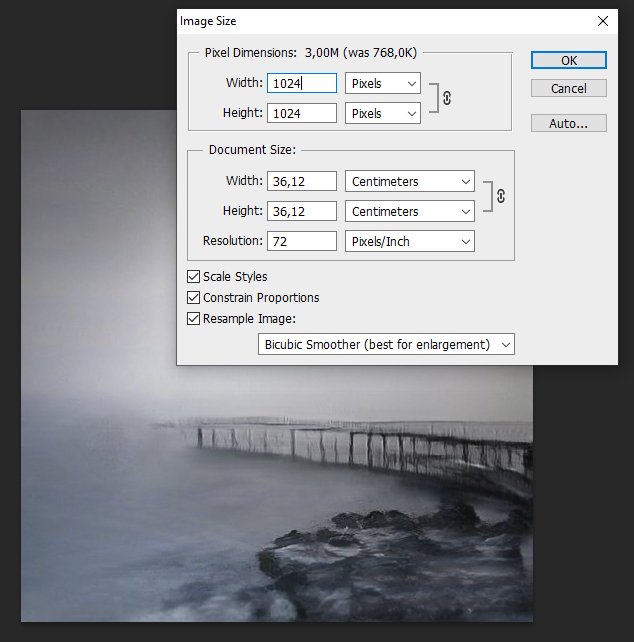

Let's go back to the first image. The GauGAN art. I wanted to make it larger. Here's what I got with Photoshop.

| Original | Settings | Larger |
|---|---|---|
|  |  |  |

As you can see, there's no chance of making this picture look like anything more than a larger, blurry version of the same pixels. I could do something about it by sharpening or blurring it a bit or applying some artistic filters, but that is not the idea in this case. So I won't.

Here's where I went with it:
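For the curious, here's a rough sketch of why a classic enlargement can only ever look like the same pixels, just bigger and softer. I don't know exactly which resampling method Photoshop applied here, so this uses plain bilinear interpolation as a stand-in, and the function name `upscale_bilinear` is made up for the example. Every new pixel is a weighted average of its nearest original neighbours, so nothing new gets invented.

```python
def upscale_bilinear(pixels, factor):
    """Enlarge a grayscale image (a list of rows of numbers) by an integer factor.

    Illustrative only: plain bilinear interpolation, assumed here as a
    stand-in for whatever resampling a photo editor actually uses.
    """
    src_h, src_w = len(pixels), len(pixels[0])
    result = []
    for y in range(src_h * factor):
        # Map the output row back to a fractional row in the source image.
        sy = min(y / factor, src_h - 1)
        y0 = int(sy)
        y1 = min(y0 + 1, src_h - 1)
        fy = sy - y0
        row = []
        for x in range(src_w * factor):
            sx = min(x / factor, src_w - 1)
            x0 = int(sx)
            x1 = min(x0 + 1, src_w - 1)
            fx = sx - x0
            # Blend the four surrounding source pixels.
            top = pixels[y0][x0] * (1 - fx) + pixels[y0][x1] * fx
            bottom = pixels[y1][x0] * (1 - fx) + pixels[y1][x1] * fx
            row.append(top * (1 - fy) + bottom * fy)
        result.append(row)
    return result


# A tiny 2 x 2 checkerboard, enlarged to 4 x 4.
tiny = [[0, 255],
        [255, 0]]
bigger = upscale_bilinear(tiny, 2)
```

The enlarged grid has four times as many pixels, but every value stays between the darkest and brightest original pixel. The sharp edge of the checkerboard turns into a grey smear, which is the blur you see in the enlarged picture.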

https://deep-image.ai

And the result was:

I will admit, this is a tough image to play with, as it doesn't have that much information in it. But think of this as a CSI (the TV series) challenge. Or some other crime series where they enlarge a single frame from security cam footage: a tiny reflection of the murderer on the hubcap of some car. In a parking garage. Underground. In poor lighting conditions. Impossible, but not in the movies. And perhaps not impossible in the near future either.

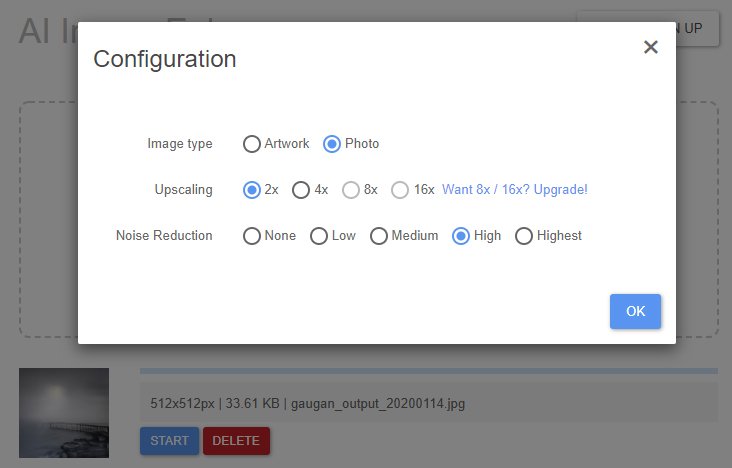

Second option:

https://bigjpg.com

Configuration: (Let's imagine this is just a crappy photo.)

Result:

I didn't try any of the online versions where you have to subscribe. I hate subscribing to places that I most probably am never going to use again. But with Topaz Labs A.I. Gigapixel (30-day trial), I had to make an exception. It's a desktop version and I have really high hopes for it.

--> https://www.provideocoalition.com/a-i-gigapixel-enlarge-images-using-artificial-intelligence/

--> http://www.northlight-images.co.uk/topaz-ai-gigapixel-review/

https://topazlabs.com/gigapixel-ai

The user interface is simple, which I like.

There are some issues, but it's not bad at all if this were a photo and by enlarging it we wanted things to be clearer, not just blurrier. Or actually, one has to know what to keep blurry and what to sharpen. Fog looks awful when sharpened. But in this version I especially like the rocks. In my mind, the pier in the original image is a wooden pier that's just a bit rotten, but it seems that this one is made out of sticks and twigs too, not only wooden poles. And that's okay; if it's true, it's true. Who am I to question the ability of a future-to-be CSI image enhancer?

Auto and manual options. Not too many choices. And that's okay, as long as the choices that are available do their job.

Do you remember my crappy camera comparison posts? Oh you don't, well that's okay, there actually isn't that much to remember. Anyway, I thought we could test Gigapixel with one of my Sony Cyber-shot DSC-P32 (3.2 MP) photos.

| Original, Sony Cyber-shot DSC-P32, 3.1 MP, 1536 x 2048 px. Full size here. | After Topaz Labs Gigapixel, 50.3 MP, 6144 x 8192 px. Full size here. |
|---|---|
|  |  |
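Megapixels are just width times height, so the sizes can be checked from the pixel dimensions alone. A minimal sketch, where the helper name `megapixels` is made up for the example:

```python
def megapixels(width_px, height_px):
    """Return the pixel count in megapixels (millions of pixels)."""
    return width_px * height_px / 1_000_000


original_mp = megapixels(1536, 2048)   # the Sony Cyber-shot DSC-P32 photo
enlarged_mp = megapixels(6144, 8192)   # the same photo after Gigapixel

print(round(original_mp, 2))           # 3.15
print(round(enlarged_mp, 2))           # 50.33
print(enlarged_mp / original_mp)       # 16.0
```

Each side grew four times, so the enlarged file holds sixteen times as many pixels. Fifteen out of every sixteen pixels in the big version are ones the software had to come up with itself.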

Now, can you tell me the letters and the numbers on the registration plates of the cars in the bottom right corner? Either one of them, so we can catch the thieves. Or was it murderers that we are searching for? I'll help you a bit. In Finland we have three letters, a dash and three numbers. On some special plates there can be fewer, so you can spell TIT-5 or AUT-0 or something else that in your mind is really funny, but usually it's three letters and three numbers. In that order. Did we catch the criminal?

I think we are not quite there yet. If we ever will be.

https://img-9gag-fun.9cache.com/photo/2078832_700bwp.webp

But what we do have is several programs that recognize what a brick wall, hair, skin or a dog looks like. Facial recognition, text, language, sounds. Even a Freddiemeter!

https://experiments.withgoogle.com/collection/ai

Enhancing a photo and adding something to it that someone assumes is or should be there are two slightly different things, though. Working with a single file as the only existing material always has its restrictions. But if we don't mind that, in the enhanced photo, not all the leaves on a tree were necessarily on the original tree when the photo was taken, then we might decide that it's more important for the texture and the image to look natural than to be 100% accurate.

So here's what I think the future holds, thanks to the vast amount of data available, the ability to combine it, and AI: when enlarging a photo, for instance, we increase the probability that the result matches how the surroundings actually looked when the photo was taken. Let me explain that a bit better. Or worse, but more. All the information we have makes guessing a bit easier. If I didn't manage to take an accurate photo of a certain building, someone else has, and with that information I can enhance my photo. And that's even more true now (or in the future) that we have code that can combine things faster than ever. And learn while doing it.

Take a look at the larger photo above and the bricks on that building. (You have to click the larger image link to see what I mean.) The brick wall, in my opinion, looks a bit like an old shingle roof. Like a cartoon where the lines are enhanced while the texture is spotty but smooth. Now, I know this particular brick wall is nothing like that when looked at up close. Furthermore, it's an old building, so it is really unique in every way. If you have a certain number of pixels, that's what you have, and you can only guess what should be there and how it should look.

But the thing is, there are plenty of photos of that particular building at Verkatehtaankatu 2, Tampere, taken over the many years of its existence. So there's plenty of material to combine or to learn from. Assuming that I would be using a program that does that: recognizes the surroundings and accesses a vast database to make my sorry-ass photo better. So I could zoom my photo all the way in to some bullet hole in the wall from the civil war, even though my camera didn't catch it. Assuming that someone else had taken a close-up of that bullet hole. And if there were no other photos of that building, there still are plenty of examples of brick walls. Of how a brick wall built in a certain century in that particular place should look.

But that's a slippery slope. Are your photos still yours if you enhance and enlarge them enough using available data that other people have provided and an AI adds to your photo for you? In the future, your photo app won't only have the option to enhance your photos to look better, the app will ask you:

"Discard rain and add a perfect sunrise?"

"Change all the cars to horse carriages?"

"Would you like to see how your photo would have looked two hours before you were there, on the corner of the street, taking this photo?"

And then there's catching criminals with photos that are made out of compromised and altered data. Seeing what isn't actually there. Or perhaps with photos and video footage of things that haven't actually happened yet in real life. Like in Minority Report.

The possibilities are endless with access to enough data. And the possibilities are beyond endless when feeding in incorrect data. Which brings me back to NVIDIA's code and especially Ganimal. For some reason I was unable to get any results from my cat's photo. I suppose a cat won't do instead of a dog. Unfortunately I don't have a proper head shot of a dog. I guess Ganimal can't be fooled with a cat's face. Or it is broken. I just wanted to see my cat's grumpy face on a dog. Or a tiger.

Here are all the GauGAN images side by side for you to compare. And I'll throw in a bigger than 2 times version too. Made with Topaz Labs Gigapixel.

| Photoshop, 2x larger | deep-image.ai, 2x larger | bigjpg.com, 2x larger | Topaz Labs Gigapixel, 2x larger. Full size here. | Topaz Labs Gigapixel, 6x larger. Full size here. |
|---|---|---|---|---|
|  |  |  |  |  |

Now that you know how this image was made, who's the artist? Me? NVIDIA? Topaz Labs? Or is this art at all?

It's there. The tweet.

https://twitter.com/insaneworksfi/status/1218592534798196736


👌

Wow @insaneworks, when you make things you do MAKE them, omg!

I'm impressed by your amazing post. You really dug into the app and the others to a depth totally unknown to me, so many thanks for sharing all that knowledge with us!

Your entry is beautiful and incredible!

Did you know that once your drawing is done it may change depending on the image selected on the image strip?

You should link your post in a comment on @steemean's related post:

https://steemit.com/art/@steemean/the-gaugan-ia-contest-on-steemit-week-13-or-o-concurso-gaugan-ia-no-steemit-semana-13

Best wishes for the contest. You set the bar too high, I will try and beat you tomorrow 😂

Have a great weekend

Hugs’n love over to you ❤️

Better do things properly or not do them at all.

I clicked on the other options too but this was something that satisfied my eye and brain most. Can't wait to see your artwork tomorrow. You better be extra awesome then. ;)

Thanks for reminding me. I'll link this post there right now.

Hugs!

/ᐠ-ᆽ-ᐟ\

This is definitely a work of art @insaneworks! So beautifully mysterious! You are totally insane! Now, I am shy to work on mine :D

And I like Minority Report!

:) Thanks!

Making it took a "few" trials and undos.

I'm gonna go right away see what you got!

Hey, do you know this AI? Deep Dream

https://deepdreamgenerator.com/

Didn't know about that one. Very interesting. :)