AI Forensics

What you do is you take a series of pictures of a room depicting a crime scene. You could also use high quality video. But the point is to capture the state of the room, as it exists right now.

Then, you give this data to an AI algorithm. This AI is trained to look at the objects in the room and figure out how they got there. Maybe there's a book on the shelf. Maybe there's a plant that has been tipped over. Maybe there's a cabinet with the doors half open.

The AI has resources to do billions of permutations on the objects. Basically, it has the time to look at how the objects likely moved to their current position, complete with a ridiculous number of possibilities.

For example, it imagines that the book got on the shelf because three weeks ago, a person purchased the book and read it, and when he was done with the book, he placed it on the shelf. Then, he tripped on the plant, which knocked it over and caused him to bump the cabinet, which caused the doors to open.

After imagining this scenario, it goes through other simulated scenarios as well. Each of them has to fulfill the requirements for the final state of the room. If a scenario does not fulfill the requirements, for example if it imagines a scenario with a horse instead of a man, it throws that scenario out of consideration, assuming that the simulation of the horse is incapable of producing the final room state.

In fact, if the horse simulation can produce the final room state, it's allowed to stay in the list of possibilities, even though you and I might still find it ridiculous.
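Here is a minimal sketch of that generate-and-filter idea. Everything in it is made up for illustration: the three-object room, the event names, and the rule that an event simply overwrites part of the room state. A real system would need an actual physics and behavior simulator, not a lookup table.

```python
import itertools

# Hypothetical toy world: the room is a dict of object -> state.
INITIAL = {"book": "absent", "plant": "upright", "cabinet": "closed"}
OBSERVED = {"book": "on_shelf", "plant": "tipped", "cabinet": "open"}

# Hypothetical events and the part of the room state each one changes.
EVENTS = {
    "shelve_book": {"book": "on_shelf"},
    "trip_on_plant": {"plant": "tipped"},
    "bump_cabinet": {"cabinet": "open"},
    "water_plant": {},  # changes nothing we can observe
}


def simulate(scenario, start=INITIAL):
    """Apply each event in order and return the resulting room state."""
    state = dict(start)
    for event in scenario:
        state.update(EVENTS[event])
    return state


def consistent_scenarios(max_len=3):
    """Try every ordering of up to max_len distinct events and keep the
    ones whose simulated final state equals the observed room."""
    survivors = []
    for n in range(1, max_len + 1):
        for scenario in itertools.permutations(EVENTS, n):
            if simulate(scenario) == OBSERVED:
                survivors.append(scenario)
    return survivors


if __name__ == "__main__":
    for scenario in consistent_scenarios():
        print(" -> ".join(scenario))
```

Notice the filter has no opinion about how silly a scenario is. Anything that reproduces the observed room survives, which is exactly why the horse gets to stay if its simulation works out.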

The point is, the AI knows the rules of the world. It knows what a book is and why it might be in the room. It knows the force required to tip over the plant rather than send it flying out the window. It knows that cabinets are usually closed, most of the time.

After billions of permutations, it might come up with millions of scenarios that it can pass along to the next AI to sift through.

The next AI might have different information. It might have ways to exclude some of the scenarios. Or it might have a way to combine them and identify probabilities. Its task is to whittle down the millions of permutations to thousands so that humans can get involved.
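As a rough sketch of what that second stage could look like: score every surviving scenario, drop the ones that extra information rules out, and pass only the top few along with normalized probabilities. The per-event plausibility numbers and the `whittle` helper are my own assumptions, not anything a real system is known to use.

```python
import math

# Hypothetical plausibility of each event, between 0 and 1.
EVENT_PRIOR = {
    "person_shelves_book": 0.9,
    "person_trips_on_plant": 0.3,
    "person_bumps_cabinet": 0.4,
    "horse_enters_room": 0.001,
}


def score(scenario):
    """Plausibility of a scenario: the product of its event priors
    (this treats events as independent, which is a simplification)."""
    return math.prod(EVENT_PRIOR[event] for event in scenario)


def whittle(scenarios, excluded_events=frozenset(), keep=2):
    """Drop scenarios that contain an excluded event, then return the
    `keep` most plausible ones with probabilities normalized over
    everything that was not excluded."""
    allowed = [s for s in scenarios if not set(excluded_events) & set(s)]
    total = sum(score(s) for s in allowed)
    ranked = sorted(allowed, key=score, reverse=True)[:keep]
    return [(s, score(s) / total) for s in ranked]


if __name__ == "__main__":
    candidates = [
        ("person_shelves_book", "person_trips_on_plant", "person_bumps_cabinet"),
        ("person_shelves_book", "horse_enters_room"),
        ("person_trips_on_plant", "person_bumps_cabinet"),
    ]
    for scenario, prob in whittle(candidates, excluded_events={"horse_enters_room"}):
        print(f"{prob:.2f}  {' -> '.join(scenario)}")
```

The `excluded_events` argument stands in for the "different information" the second AI might have, such as knowing that no horse could fit through the door.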

Then it shows the human two recreations of the scenarios and asks which of the two seems more likely. After a few interviews, it starts all over again, with these answers as inputs.

When it has performed this full iteration a few times, it ends up with a few likely scenarios.
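One way to turn those "which of these two seems more likely?" answers into a ranking is an Elo-style rating, the same kind of update used to rank chess players. That is my substitution for illustration; nothing above says which method such a system would really use, and the scenario names below are invented.

```python
def update_ratings(ratings, winner, loser, k=32.0):
    """Return new ratings after a human picked `winner` over `loser`."""
    # Probability the winner was expected to win, given current ratings.
    expected = 1.0 / (1.0 + 10 ** ((ratings[loser] - ratings[winner]) / 400.0))
    delta = k * (1.0 - expected)
    updated = dict(ratings)
    updated[winner] += delta
    updated[loser] -= delta
    return updated


def rank_from_judgments(scenarios, judgments, start=1000.0):
    """Rank scenarios from a list of (winner, loser) human answers."""
    ratings = {scenario: start for scenario in scenarios}
    for winner, loser in judgments:
        ratings = update_ratings(ratings, winner, loser)
    return sorted(ratings, key=ratings.get, reverse=True)


if __name__ == "__main__":
    scenarios = ["tripped_on_plant", "burglar", "horse"]
    answers = [
        ("tripped_on_plant", "horse"),
        ("burglar", "horse"),
        ("tripped_on_plant", "burglar"),
    ]
    print(rank_from_judgments(scenarios, answers))
```

Each round of interviews nudges the ratings, and the top-rated scenarios are the ones fed back into the next iteration.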

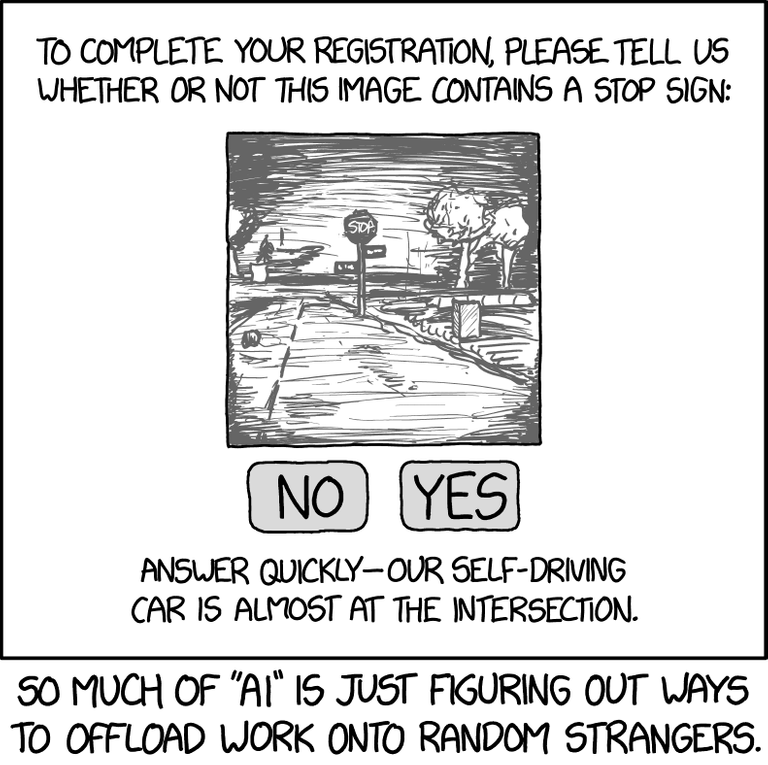

What I described above is how a lot of science is being done these days. Though it's not being used in forensics (yet), it is being used in astronomy to guess the likely cause of celestial phenomena. It's being used to produce new kinds of semiconductors. It's also being used to train self-driving cars.

You know. Fun stuff like that.

"Crowdsourced steering" doesn't sound quite as appealing as "self driving."

I had never thought about AI stuff like that, that it could be used to figure out the most likely way a crime happened. So cool, and a bit creepy at the same time if it were possible to manipulate the AI against or towards certain people.

And the car example brings new meaning to filling out those captchas!

That leads to a good question. Would it ever be acceptable to bring AI determined evidence to court as facts? Would these determinations be admissible? Would they be subject to cross-examination?

Or would they just be used to aid investigators in exclusion like DNA evidence?

Yeah it's hard to know if it will be accepted as fact or as close to fact as possible, in interpreting DNA or other factors in a case. It's harder to cross examine a computer than a blood spatter expert or other expert witness.

If the code and whatever data the AI has already seen were publicly available, then in theory the public or judges could tell whether the AI is unbiased.

I think if it was allowed into criminal trials in a country there would be a lot of scepticism before it was widely accepted as providing more accurate or fairer outcomes and fewer false convictions.

I could see it being used by police for interrogation "our AI says 90% of the time your alibi won't be believable to a jury given the evidence at this part of the room, so confess and cut some time from your sentence". I suppose that would depend on it being allowed to be used for such purposes.