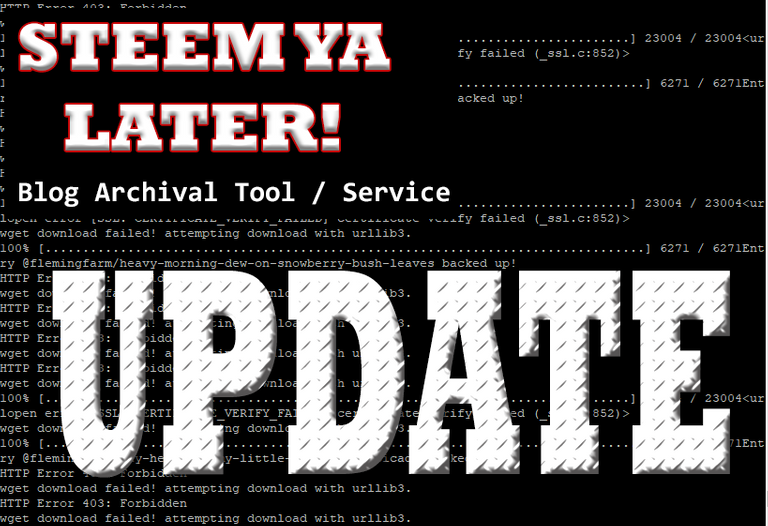

SteemYaLater Update: Logging, Results CSV, Certificate Validation, MD5 Image Checksums and More!

Now we can back up your Steem blogs and images with a bit more sophistication

I was up until the wee hours last night tinkering with the code and successfully backed up @saboin's and @steemseph's blogs and images so they may be safely stored for posterity in the medium of their choosing. I'm sure many would agree that our Steem blogs and images are precious, so I'm glad I can help people take the preservation of their data into their own hands.

To get the word out about the soon-to-be-launched service, I will be offering a complimentary backup job for the first ~~3~~ 7 resteems.

All 10 slots were filled yesterday. Thanks, y'all!

During the process, a few shortfalls were identified:

- Some of y'all are blogging machines!

The script is designed to indiscriminately download every image, including those that are repeated in other entries. This can result in a great deal of duplicated data and a hefty parent folder. For perspective, @steemseph's backup was around 2.3 GB of data. Thankfully, my cloud provider allowed that amount, but I suspect we may have to split some blogs into chunks for transfer.

To prevent duplication of data, I am devising a system that first collects all file hashes in the folder structure of the past backup (sometimes it takes multiple passes with the script due to various front end limitations) and compares them to the hash of the remote file before saving another copy to disk. Ideally, we would want to prevent the download from occurring in the first place but, at present, I am unsure whether there is a mechanism to obtain a remote file hash without downloading the file. The prime consideration is to reduce the size of the blog archive, and we can work on other issues from there.
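The de-duplication idea above can be sketched roughly as follows. This is a minimal illustration, not the code in the repository: the helper names `build_hash_index` and `save_if_new` are hypothetical, and it assumes the image bytes have already been downloaded into memory.

```python
import hashlib
from pathlib import Path


def md5_of_bytes(data: bytes) -> str:
    """Return the hex MD5 digest of an in-memory payload."""
    return hashlib.md5(data).hexdigest()


def build_hash_index(backup_root: str) -> set:
    """Walk an existing backup folder and collect the MD5 of every file in it."""
    index = set()
    for path in Path(backup_root).rglob("*"):
        if path.is_file():
            index.add(md5_of_bytes(path.read_bytes()))
    return index


def save_if_new(data: bytes, dest: str, index: set) -> bool:
    """Write data to dest unless identical content is already in the backup."""
    digest = md5_of_bytes(data)
    if digest in index:
        return False  # duplicate content, skip the write
    dest_path = Path(dest)
    dest_path.parent.mkdir(parents=True, exist_ok=True)
    dest_path.write_bytes(data)
    index.add(digest)  # later passes in the same run also see this file
    return True
```

Building the index once per run and updating it as files are saved means repeated passes over the same blog should not write the same image twice, although each image is still downloaded before it can be compared.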

- Front End Limitations - Throttling / Rate Limiting

We appear to have run into rate limiting or throttling of our HTTP GET requests, which I suspect was triggered by too many concurrent requests. To mitigate this, I have implemented a short sleep interval before each request; however, the better option would likely be to set limits on the urllib connection pool. I will have to consult the documentation to figure it out.
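One way to combine the sleep interval with a cap on concurrent requests is sketched below using only the standard library. The `Throttle` class and its default values are assumptions for illustration, not what the script currently does. If the script uses urllib3, its `PoolManager` also accepts `maxsize` and `block` arguments that bound the connections kept per host, which may be the cleaner route.

```python
import threading
import time


class Throttle:
    """Cap concurrent requests and enforce a minimum gap between request starts."""

    def __init__(self, max_concurrent: int = 4, min_interval: float = 0.25):
        self._slots = threading.BoundedSemaphore(max_concurrent)
        self._lock = threading.Lock()
        self._min_interval = min_interval
        self._next_start = 0.0

    def __enter__(self):
        self._slots.acquire()  # wait for a free concurrency slot
        with self._lock:
            now = time.monotonic()
            wait = self._next_start - now
            # Reserve the next start time so callers are spaced evenly.
            self._next_start = max(now, self._next_start) + self._min_interval
        if wait > 0:
            time.sleep(wait)
        return self

    def __exit__(self, exc_type, exc, tb):
        self._slots.release()
        return False  # never swallow exceptions from the request


def fetch_throttled(url, download, throttle):
    """Run the caller-supplied download(url) under the throttle."""
    with throttle:
        return download(url)
```

A single `Throttle` instance would be shared by every worker thread so the limits apply across the whole run rather than per thread.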

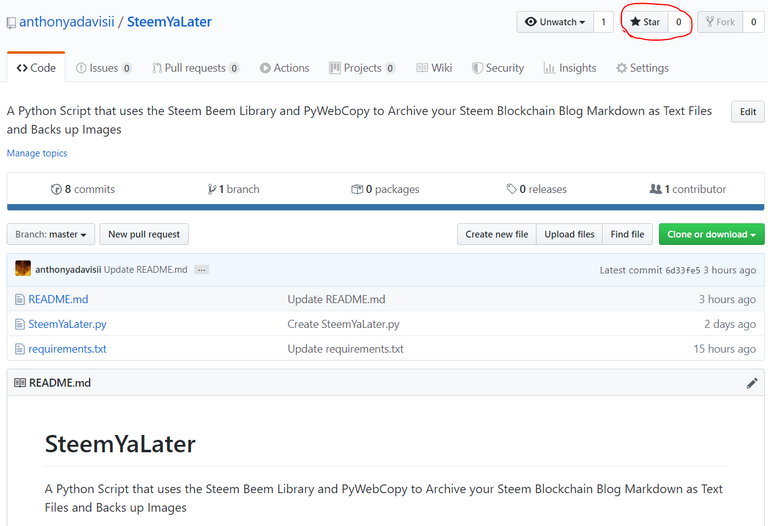

Repository: https://github.com/anthonyadavisii/SteemYaLater (Will be updated once data de-duplication changes are implemented)

I would highly appreciate any stars and follows on my GitHub if you like what I am doing and the direction of the project.

Additional features added:

- Logging

- Results to CSV

This means a results file will be included in the package so you can identify the images that the script was not able to obtain and attempt manual downloads as needed.

- SSL / TLS Certificate Validation

While reading the documentation, I realized this was a recommended feature for security. I updated the readme.md to reflect the steps to download the required Python module. This means web server certificates will be validated to mitigate the potential for MITM (Man in the Middle) attacks.

I am not too familiar with what kind of malicious payload, if any, could be executed from inside an image. I do recall an art known as steganography, whereby secret messages are hidden inside an image. Perhaps it could be done via metadata or patterns, but it is a pretty interesting methodology.
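For readers curious what certificate validation looks like in practice, here is a minimal standard-library sketch. It is an assumption for illustration and not the approach in the repository, which relies on an additional Python module as described in the readme; the function names `make_verified_context` and `fetch_verified` are hypothetical.

```python
import ssl
import urllib.request


def make_verified_context() -> ssl.SSLContext:
    """Build a TLS context that verifies the certificate chain and hostname."""
    context = ssl.create_default_context()  # loads the system CA store
    # These are already the defaults; set explicitly so the intent is visible.
    context.check_hostname = True
    context.verify_mode = ssl.CERT_REQUIRED
    return context


def fetch_verified(url: str, timeout: float = 30.0) -> bytes:
    """Download url; urlopen raises urllib.error.URLError if validation fails."""
    context = make_verified_context()
    with urllib.request.urlopen(url, timeout=timeout, context=context) as resp:
        return resp.read()
```

With this in place, a server presenting an untrusted or mismatched certificate causes the download to fail instead of silently succeeding.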

- Get File Hash Function

This function obtains local and remote file hashes, which will help minimize the data duplication described previously. It has yet to be fully tied in, but I did test it on one of @flemingfarm's images and the hash is consistent. I read that the blake2b algorithm may be faster and more secure (less prone to collision), so I may opt for that in the future.
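A hash helper along these lines could look like the sketch below. It is an assumption about the shape of such a function rather than the repository's actual code. It takes any binary file-like object, so the same call should work for a file opened with `open(path, "rb")` and for an HTTP response object that supports `read(n)`.

```python
import hashlib


def get_file_hash(source, algorithm: str = "md5", chunk_size: int = 65536) -> str:
    """Hash a binary file-like object in chunks and return the hex digest."""
    hasher = hashlib.new(algorithm)
    # Read fixed-size chunks so large images never sit fully in memory.
    for chunk in iter(lambda: source.read(chunk_size), b""):
        hasher.update(chunk)
    return hasher.hexdigest()
```

Because the algorithm is a parameter, moving from MD5 to BLAKE2b would be a matter of passing `algorithm="blake2b"`, as long as hashes from the two algorithms are never compared with each other.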

- Batch Operations

Considering the 10 resteem quota was met, I have my work cut out for me. Instead of running backups in ones and twos, I decided to restructure things into a function that can receive one account or several accounts as the parameter. If accounts are not specified in the script, the usual user prompt will occur. This will hopefully make obtaining everybody else's backup less time intensive.

Looking forward to completing the backups for @flemingfarm, @jacobpeacock, @cadawg, @jacobtothe, @freebornangel, @jlsplatts and @oguzhanon. I've got my fingers crossed that they are not super image dense but, nevertheless, we will find a way ahead. 😅
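The batch behavior described above could be sketched like this. The names `normalize_accounts`, `backup_accounts`, and `backup_one` are hypothetical stand-ins; `backup_one` represents whatever function performs a single account's backup.

```python
def normalize_accounts(accounts=None, prompt=input):
    """Return a list of account names from a string, an iterable, or a prompt."""
    if accounts is None:
        # Nothing specified in the script, so fall back to asking the user.
        accounts = prompt("Steem account(s) to back up, comma separated: ")
    if isinstance(accounts, str):
        accounts = accounts.split(",")
    return [name.strip().lstrip("@") for name in accounts if name.strip()]


def backup_accounts(backup_one, accounts=None, prompt=input):
    """Run backup_one for each account, recording failures without stopping."""
    results = {}
    for account in normalize_accounts(accounts, prompt):
        try:
            backup_one(account)
            results[account] = "ok"
        except Exception as exc:  # one bad account should not halt the batch
            results[account] = f"failed: {exc}"
    return results
```

Accepting either a single name or a list keeps the one-account case working as before while letting a whole queue of backups run unattended.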

P.S. I'm gonna need to mount a new VHD to my VM. I checked and had 4 GBs left. Whew!

Thanks for stopping by!

How to put your FREE Downvotes to work in 2 easy steps!

This post was created using the @eSteem Desktop Surfer App.

They also have a referral program that promotes users to onboard to our great chain. Sign up using my referral link to help support my efforts to improve the Steem blockchain.

Ditch Partiko and get eSteem today!


Thanks for mentioning eSteem app. Kindly join our Discord or Telegram channels to learn more about eSteem, don't miss our amazing updates.

Follow @esteemapp as well!

According to the Bible, Bro. Eli Soriano: What really happened to Joseph Smith?

Watch the Video below to know the Answer...

(Sorry for sending this comment. We are not looking for our self profit, our intentions is to preach the words of God in any means possible.)

Comment what you understand of our Youtube Video to receive our full votes. We have 30,000 #SteemPower. It's our little way to Thank you, our beloved friend.

Check our Discord Chat

Join our Official Community: https://steemit.com/created/hive-182074

Bruh do you even Bible?

Your religion thinks Jesus is the BROTHER of Lucifer! Well chew on this.

All things were created BY HIM. Including the fallen angels. Enough with the spam. You're not making converts. You're making a joke of yourself.

False religion is so cringe. Flagged for spam.

Funny thing, I grew up in that.... LOL Joseph Smith was a “crystal Gazer/fortune teller” His father was kicked out of multiple churches for his witchcraft.... ummm ya... weird!!!

Did I resteem it fast enough? I have always been after something like this!

You got it!

Woot woot, you da man!!!

Very cool and thank you! Super handy and there are a lot of people who will really dig having this ability for a full backup. Always been one of the things I thought should have been an integral part of our accounts.

I know right! Perhaps, if I can refine it enough the front end owners may consider integration. Worth a shot

Resteemed, but obviously I don't need a duplicate backup contest entry!

OK, so there's still one more slot open. Hopefully they see this. Maybe I should update the post and say 4 to spare the confusion.

Thanks btw

I am glad to see that you are making progress!

Hello!

This post has been manually curated, resteemed

and gifted with some virtually delicious cake

from the @helpiecake curation team!

Much love to you from all of us at @helpie!

Keep up the great work!

Manually curated by @solominer.

@helpie is a Community Witness.

$trdo

!COFFEEA

For you

Congratulations @avare, you successfully trended the post shared by @anthonyadavisii!

@anthonyadavisii will receive 0.13170600 TRDO & @avare will get 0.08780400 TRDO curation in 3 Days from Post Created Date!

"Call TRDO, Your Comment Worth Something!"

To view or trade TRDO go to steem-engine.com

Join TRDO Discord Channel or Join TRDO Web Site

Congratulations @anthonyadavisii, your post successfully received 0.131706 TRDO from below listed TRENDO callers:


Hello @anthonyadavisii.

Your post has been manually upvoted at 25%.

Amazing

Thanks for your work on this project.

This is great news! I'll be using this for sure! Thanks man 😀