The Algorithm did it... The Code is Law.

I am a programmer. I was a programmer before I was really a writer. I was actually a programmer before any of the things I consider myself good at. I was a decent Dungeon Master before I became a programmer but that only predates my first experience programming by a year or so. I was VERY young.

My first computer had 2K RAM. To put that into context this post would likely not have fit into its memory. Imagine trying to make simple games within such a constraint. That is where I began my adventures in programming.

I then moved on to 16K, and then 64K, which was my main area of operation for many years. Later I kept building my own computers with more and more RAM. The machine I am writing this on has 32GB of RAM, and strangely enough I don't feel the need for more at the moment. Some of the servers I build and deploy for work have 96GB of RAM. When I was younger we were constantly running into barriers and limitations due to running out of RAM.

This led us to design things to be as compact and efficient as we could. Later on I'd begin exploring the internet and we had modems which were measured by BAUD rate. My slowest modem was a 300 BAUD modem, and I could type faster than it could display information.

In that era I was heavily focused on determining how to do big things within the limits of the BAUD. As time progressed BAUD as a limitation started to fade and this made things like HTML very successful because while it is not compact and efficient in terms of space it is very easy for humans to read and use.

One of my passions was AI. I was particularly fond of trying to mimic spoken intelligence. Consider it early aspects of things like Alexa, Google, Siri, etc. I was actually inspired by things like Eliza, and Racter.

My earliest attempt was actually quite the stupid and simple program I called "The Cutdown Machine". I was probably 13 or 14 when I wrote it. That'd put it in the 1983 or 1984 time frame. I remember losing cutdown battles with some of my "friends" who sometimes were not that verbally friendly.

The program was stupid and had absolutely no intelligence. It would take the things I said to it, or my friends said to it and stick them in an array of strings. It would then randomly slam different strings together and spit out a response. It was often gibberish and complete nonsense. Sometimes it was amazingly funny though and would have us crying we were laughing so hard. I also found that I could mimic that approach myself after that and come up with some pretty original and funny cut downs. I didn't even have to use profanity to do it.
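
For the curious, here is a minimal Python sketch of that idea. It is not the original program (that is long gone and was not written in Python); the class and method names are made up for illustration. It stores whatever it hears and glues the front of one stored line onto the back of another.

```python
import random


class CutdownMachine:
    """Toy phrase masher: no understanding, just stored strings and randomness."""

    def __init__(self, seed=None):
        self.phrases = []                  # the "array of strings"
        self.rng = random.Random(seed)     # seedable so runs can be repeated

    def hear(self, line):
        """Store whatever was typed in, ignoring blank lines."""
        line = line.strip()
        if line:
            self.phrases.append(line)

    def respond(self):
        """Slam the front of one stored phrase onto the back of another."""
        if not self.phrases:
            return ""
        first = self.rng.choice(self.phrases).split()
        second = self.rng.choice(self.phrases).split()
        head = first[: self.rng.randint(1, len(first))]       # at least one word
        tail = second[self.rng.randint(0, len(second) - 1):]  # at least one word
        return " ".join(head + tail)


machine = CutdownMachine()
machine.hear("your shoes are older than dirt")
machine.hear("you smell like a wet dog")
print(machine.respond())
```

Most of what comes out is nonsense. Every word in the output came from something a person typed in, which is the whole point.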

That was in no way intelligent. It wasn't even what we call an expert system. Yet by sheer luck of randomness sometimes it could appear to be so.

I would read about Eliza in a Scientific American, Omni, or some other magazine of the time. Where I lived I tended to know quite a bit more about programming than any available teachers. It was also pretty remote so there were no BBS systems that could be reached without paying expensive long distance phone calls. I lived what other people were doing through magazines and occasional PBS documentaries on television and such.

I'd hear about things, or read about them and if I wanted to see what they did I had to attempt to make them myself.

Mimicking human speech like Eliza does, in the quest towards a program that can pass the Turing Test (a computer which, when spoken to through communication devices, is indistinguishable from a human), could be basic or complex. There were many approaches.

I quickly realized it was not truly aware of anything. It was programmed to react to certain words, and certain word positions. If you designed it well you could actually fool people for quite some time with just this technique.

I started wondering about ways to make something aware of things when the sentence structures varied. I played around with basic logic and imbuing them with logic. Things like telling it "A Mammal is an animal". "A mammal has hair". "A human is an animal". Then being able to ask it "Does a human have hair?" to which with the information I provided it would say "I Don't Know" or some other sentence I designed to act like a person who doesn't know something. Perhaps I might make it try to change the subject. However, if I told it that "a human is a mammal" and then asked it "Does a human have hair?" it would respond yes, because I previously taught it that a mammal had hair.
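
A small Python sketch of that kind of fact store follows. It skips the sentence parsing entirely and takes the facts as plain function calls. The names (`KnowledgeBase`, `tell_is_a`, `tell_has`, `answer`) are invented for this example, and it uses recursion for brevity.

```python
class KnowledgeBase:
    """Stores 'X is a Y' and 'X has Z' facts and answers 'does X have Z?'."""

    def __init__(self):
        self.is_a = {}   # thing -> set of categories it belongs to
        self.has = {}    # thing -> set of properties it has

    def tell_is_a(self, thing, category):
        self.is_a.setdefault(thing, set()).add(category)

    def tell_has(self, thing, prop):
        self.has.setdefault(thing, set()).add(prop)

    def ask_has(self, thing, prop, seen=None):
        """Check the thing itself, then everything it 'is a' kind of."""
        seen = set() if seen is None else seen
        if thing in seen:            # guard against circular facts
            return False
        seen.add(thing)
        if prop in self.has.get(thing, set()):
            return True
        return any(self.ask_has(parent, prop, seen)
                   for parent in self.is_a.get(thing, set()))

    def answer(self, thing, prop):
        return "Yes" if self.ask_has(thing, prop) else "I don't know"


kb = KnowledgeBase()
kb.tell_is_a("mammal", "animal")
kb.tell_has("mammal", "hair")
kb.tell_is_a("human", "animal")
print(kb.answer("human", "hair"))   # I don't know
kb.tell_is_a("human", "mammal")
print(kb.answer("human", "hair"))   # Yes
```

Nothing in there knows what a human or hair is. It follows links between strings.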

The first time I wrote this I did so in a BASIC programming language on an Amiga 1000 which did not have support for recursion. I thus had to mimic it to some degree for traversing the logic tree. I decided to put a limit to how deep it would search. If something was far enough removed in the number of hops to get the answer then it might still say "I don't know".

I tweaked this a bit and had it so if you asked a question such as "Does a human have hair?" and it had to traverse to the second level to find out a mammal has hair then it would automatically create a link for itself "Humans have hair" on the first tier and thus shift the knowledge more to the top of what it knew about humans.

This worked well... It especially worked well when I decided to make it dream. If you didn't talk to it for a while it'd go into dream mode and grab words and ask itself questions about other words. If the questions led to something several levels down in the recursion the dreaming would create a level 1 link and thus it actually kind of learned while it was dreaming. I could come back and ask it more complicated questions about things very far removed and it usually would have an answer.
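
Here is a rough Python sketch of the depth limit, the level 1 shortcut links, and the dreaming, all together. It is a reconstruction of the idea and not the original BASIC code. The names `DreamingKB`, `max_depth`, and `dream` are made up for illustration, and it uses a work queue in place of recursion as one way to mimic it.

```python
import random
from collections import deque


class DreamingKB:
    """Depth-limited fact lookup that promotes distant answers to level 1."""

    def __init__(self, max_depth=3, seed=None):
        self.is_a = {}               # thing -> set of categories
        self.has = {}                # thing -> set of properties
        self.max_depth = max_depth   # how many levels it is willing to search
        self.rng = random.Random(seed)

    def tell_is_a(self, thing, category):
        self.is_a.setdefault(thing, set()).add(category)

    def tell_has(self, thing, prop):
        self.has.setdefault(thing, set()).add(prop)

    def ask_has(self, thing, prop):
        """Search level by level with an explicit queue, no recursion."""
        queue = deque([(thing, 1)])  # level 1 is the thing itself
        seen = set()
        while queue:
            current, depth = queue.popleft()
            if current in seen:
                continue
            seen.add(current)
            if prop in self.has.get(current, set()):
                if depth > 1:
                    self.tell_has(thing, prop)   # create the level 1 link
                return True
            if depth < self.max_depth:
                for parent in self.is_a.get(current, set()):
                    queue.append((parent, depth + 1))
        return False                 # too far away, or simply unknown

    def dream(self, rounds=10):
        """Ask itself random questions; any hit leaves a shortcut behind."""
        things = sorted(set(self.is_a) | set(self.has))
        props = sorted({p for ps in self.has.values() for p in ps})
        if not things or not props:
            return
        for _ in range(rounds):
            self.ask_has(self.rng.choice(things), self.rng.choice(props))


kb = DreamingKB(max_depth=2)
kb.tell_is_a("poodle", "dog")
kb.tell_is_a("dog", "mammal")
kb.tell_has("mammal", "hair")
print(kb.ask_has("poodle", "hair"))   # False: the answer is three levels away
kb.dream(rounds=200)
print(kb.ask_has("poodle", "hair"))
```

With a depth limit of 2 the first question fails. Once a dream happens to ask whether a dog has hair, the shortcut brings the answer within reach of the poodle question.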

Was it alive? No. Was it self aware? No. Could it operate outside of what I programmed it to do? No. Could it fool people? Yes, sometimes... Was it Artificial Intelligence? No. It was not intelligent. It was simply what is called an Expert System. A system I designed within rules (aka algorithms) for how to do very specific things.

It could give an illusion.

Back then we didn't have something like Google, the web, etc. If we had I could totally imagine turning something like my system loose on the internet and letting it read web pages and learn new logical connections all on its own.

Yet even with that it'd still be trapped within the confines of the rules. It could potentially answer questions. It might be a good tool. Yet it would not be intelligent.

I was also completely aware of the concept of an algorithm. This is a word that people use to cause people to stop questioning. They use it to try to shift the blame. They use it to justify actions. They count on the fact that most people are completely ignorant of what it truly means...

I would like to dispel that.

Algorithms

Algorithms are essentially recipes. They are a set of steps to follow to accomplish a specific task. Much like when you cook using a recipe, or you assemble something using an instruction manual. Algorithms will accept input that they can react to and they will provide their results in output. What is important to know is that programs are essentially just collections of algorithms.

If you create an algorithm to alphabetize names (aka sort) then you can reuse that algorithm within a program, or within other algorithms anywhere that you need something alphabetized. You build a little tool that follows instructions.
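
As a small illustration in Python (the function names are invented for this example), here is an alphabetizing recipe written out step by step and then reused inside another function:

```python
def alphabetize(names):
    """Insertion sort: take each name and walk it to its place, ignoring case."""
    result = []
    for name in names:
        position = 0
        # Step past every name that should come before (or ties with) this one.
        while position < len(result) and result[position].lower() <= name.lower():
            position += 1
        result.insert(position, name)
    return result


def guest_list(invited, plus_ones):
    """A second algorithm that reuses the first one as a tool."""
    return alphabetize(invited + plus_ones)


print(guest_list(["Zed", "alice"], ["Bob"]))   # ['alice', 'Bob', 'Zed']
```

Every step is something a person wrote down on purpose. Python's built-in `sorted` would do the same job; the loop is spelled out only to show there is no magic in it.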

The key is that it was built by a person. A person knows what it will do. If it does something then it is very likely BY DESIGN and INTENTIONAL.

(Image Source: quora.com)

As things get more complicated there can be outcomes that were not expected. Sometimes they are good. Sometimes they are bad. When they are bad they are often called a BUG.

It can be helpful to imagine that it is your job to do what an algorithm does, and to ask what steps you yourself would take. Doing that can demystify some of the excuses that you'll encounter.

(Image Source: code.org)

Sometimes the unforeseen aspect of the algorithms or combination of algorithms will be that it is somehow exploitable. This is how security breaches and other things usually occur. It is how computer viruses tend to operate though such things often also play upon expected repetitive human behavior.

We tend to fix these exploits when their outcome is considered bad. What do we see when the exploit granted someone power, or wealth and they are in a position to protect a FIX from being implemented? Perhaps we might encounter the phrase "The Code is the Law".

Considering how I've been explaining the operation of algorithms you should really think about that for a while.

Code is Law

This is something that is a popular umbrella to hide beneath. It tends to only become a mantra when there are people that are benefitting from exploiting or taking advantage of some aspects of the code/algorithm/program and they don't want that "fixed". They may even say it isn't an exploit. "Code is Law". Often the only advantage they have is that they noticed it before others, took advantage of it, gained power/wealth/advantage in comparison to others and they don't want it closed. If they were not first to the exploit they might be in the camp of people that see it as a flaw or a bug.

If it was explicitly stated in the design documents that this exploitation was a desirable outcome then it would indeed not be a flaw. If on the other hand it is not mentioned in the design documents then it is likely it is just an exploit, oversight, flaw, or bug.

It becomes a problem when "Code is Law" becomes the chant to protect a flaw because it has ensured those who discovered it will remain in power, gain power, and potentially have gained enough power to prevent others from being able to take advantage of the flaw as they did. At this point it is about power. It is not about ethics, morality, or any interest in other people.

Sometimes the Code is Law within a system if what is occurring was explicitly described in the design documents.

I can assure you I've read white papers for some projects where what people now defend with "Code is Law" was not only not explicitly listed but the rest of the document had a message that seemed to indicate such a thing would be destructive to the intended goals.

In this case "Code is Law" can corrupt and destroy a project...

Furthermore, code can be changed just as laws can be changed.

"The Code did it" is usually a lame excuse. Fine. The code allowed it. Does that mean we shouldn't fix it, improve it, challenge it, ask questions, etc.?

The Code Did It

In the past half decade we've seen an awful lot of censorship, banning, etc. It has often been blamed on algorithms and people just accept that as an excuse and move on.

Algorithms are programmed by people. If they are targeting specific groups at a much higher degree of scrutiny and/or censorship then that is by design. A person or group of people had to design the algorithm to facilitate that and they had to tell it what to look for.

It is a lame excuse and it is an excuse none of you should accept. It is people preying upon the ignorance of the population when it comes to computer programming.

What about machine learning?

Programs are built from collections of algorithms. When we have machine learning we take things such as alphabetizing and we allow the program to try to randomly come up with new code to improve upon the speed of the alphabetizing. Some code will fail and be discarded. Other code will work faster and will be what it uses until it finds something else. It is kind of evolution targeting specific algorithms.
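
A toy Python sketch of that evolve-and-discard loop follows. It covers only one narrow flavor of what gets called machine learning, and the setup is an assumption chosen to keep it short: instead of generating new code, it randomly tries new gap sequences for a Shell sort, throws away any that fail to sort the sample lists, and keeps whichever needs the fewest comparisons. The names `shell_sort` and `evolve_gaps` are invented for this example.

```python
import random


def shell_sort(items, gaps):
    """Sort a copy of items using the given gaps; return (result, comparisons)."""
    data = list(items)
    comparisons = 0
    for gap in gaps:
        for i in range(gap, len(data)):
            value = data[i]
            j = i
            while j >= gap:
                comparisons += 1
                if data[j - gap] <= value:
                    break
                data[j] = data[j - gap]   # shift the larger item up by one gap
                j -= gap
            data[j] = value
    return data, comparisons


def evolve_gaps(samples, generations=200, seed=0):
    """Random search: keep a candidate only if it still sorts and costs less."""
    rng = random.Random(seed)

    def cost(gaps):
        total = 0
        for sample in samples:
            result, comparisons = shell_sort(sample, gaps)
            if result != sorted(sample):
                return None               # this candidate failed: discard it
            total += comparisons
        return total

    best = [1]                            # a single gap of 1 is plain insertion sort
    best_cost = cost(best)
    for _ in range(generations):
        size = rng.randint(1, 4)
        candidate = sorted({rng.randint(1, 50) for _ in range(size)}, reverse=True)
        candidate_cost = cost(candidate)
        if candidate_cost is not None and candidate_cost < best_cost:
            best, best_cost = candidate, candidate_cost
    return best, best_cost


sample_rng = random.Random(42)
samples = [[sample_rng.randint(0, 999) for _ in range(60)] for _ in range(5)]
print(evolve_gaps(samples))
```

A person chose what counts as "better" (fewer comparisons) and what counts as "working" (matches a known good sort on these samples). The program only searches inside that frame, and a winner that passes the samples has not been proven correct on anything else.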

This can help with word recognition, facial recognition, and many other things. It is still just algorithms strung together. It is not awareness. It is not Artificial Intelligence.

It is expert systems mimicking intelligence and giving an illusion that they are intelligent simply because they accomplish tasks that we tend to use our intelligence to do ourselves.

Being ignorant about this subject has been weaponized and is often used by those pushing the propaganda to con the population.

They use these concepts the same way they do when they say "Experts say", "Scientists say", "Doctors say", "My priest says", etc. It is an argument from authority fallacy.

These algorithms are designed by people, and may be made more efficient through machine learning. What they choose to conceal, censor, etc. That is dictated by humans. It is NOT the fault of the algorithm.

Code is Law applied to real life...

I can pick up a rock and smash someone in the head. The code of reality allows it. Does that mean I should do it? Does that mean if I do it that it is acceptable?

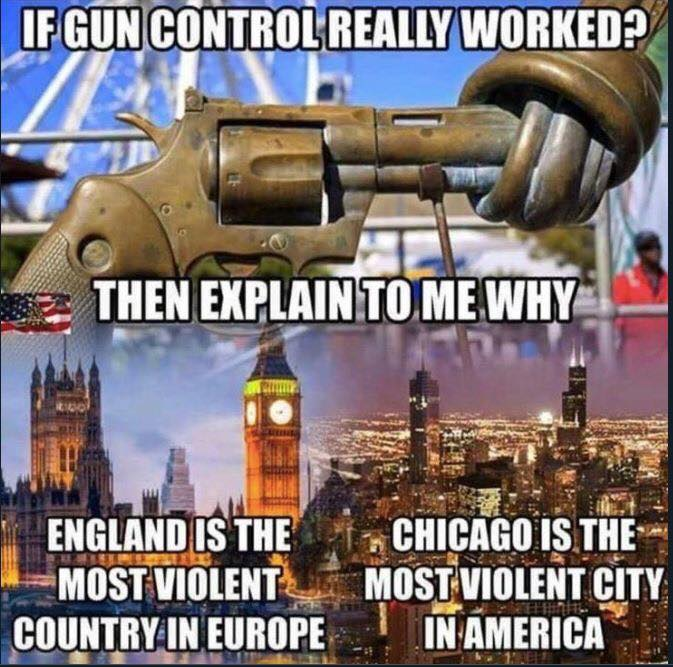

(Image Source: thepoliticalinsider.com)

How is that Gun Control algorithm working out for you Chicago? Compare places with high gun control algorithms to places without... you'll see a trend. It won't be the narrative the news is pushing.

EDIT: An interesting thing about guns. They have been called the "Great Equalizer". A gun does not care how wealthy a person is. A gun does not care how big or small a person is. It is a tool that works equally for all people. Do people abuse it? Yes. They tend to be brought to justice when they do so. Places with the most guns tend to be VERY polite. Places with the most mass shootings tend to be places where the criminals (i.e. they don't care about gun laws) know that only police and others that will take a while to respond will be armed and can stop them. Soft and easy targets. The guy that did the Pulse Night Club shooting went by several other targets and passed them due to security until stopping at the Pulse Night Club. Anecdotal? Sure until you study the actual statistics.

there was an off-duty officer, armed, serving as security at Pulse as well.

Yeah. Did the shooter see him? Everything I read indicated he had gone to at least two other night clubs and moved on due to obvious security.

what did you read? i'd love more resources.

Good luck with that. I read it probably two or three years ago at this point. Could I find it? MAYBE. Do I want to spend the time looking for it? Not really.

You don't have to take my word for it. I'd start searching and probably keep hunting around. It could have even been more than three years. It all blurs together after a while. So much has gone on since then.

This is not intended as smart ass. I just bet that would not be easy to find again. Who knows it might not be so bad though.

Actually it isn't as hard as I thought...

In duckduckgo.com my first search turned up some things... I didn't dig deep and it likely is not the only thing like this.

I used the following keywords without quotes "pulse night club multiple targets"

looks like that's a take from the prosecution against Noor Salman, a case that eventually fell apart. now, did Mateen case Disney Springs and not like that cops were there? probably. did he ultimately decide to go to Pulse? certainly. but i don't think that says exactly what you want it to say.

Like I said... years ago. I'm not going to do the research. I wasn't even planning on doing that much but I did anyway. :)

You can trust what you trust. I'm not likely to change that.

I know gun control is bullshit and you'll likely not change my mind on that. :) (Not impossible, just highly unlikely) I could get behind mass gun education though. People that don't know how to handle them or be safe with them can be very dangerous even when they don't mean to be.

As to the details of the event you might. Yet ultimately it isn't important enough to devote time trying to find it again. It is also possible I am completely misremembering it. I make mistakes.

I do get somewhat tired of seeing that phrase on Hive, when people try to justify their anti-social behavior.

The down vote is a tool, and I feel it is misused far too often, yet when down voters are confronted the response is 'code is law'. I am not anti down vote, they are needed, but they are still misused. And people do not want the algorithm fixed, because they would lose some power.

That definitely does appear to be the case.

That meme isn’t even close.

Chicago not even in the Top 10?

Detroit FTW!

England isn’t right either. Russia and Ukraine both have much more violent crime. And even if excluding Eastern Europe, don’t France and Belgium have more violent crime per capita than the UK?

Yeah. I think Norway oddly enough had more mass shootings than England. :)

The meme was something I quickly found. I think it is more about gun crime rather than simply violent crime. Violent crime can include a lot more than just guns. Yet I still don't think the UK is at the top.

Baltimore is also pretty bad in the U.S. right now. Most leftist controlled cities with stricter gun control are worse than the places without it. That might be true across the board but I won't say that is the case because I haven't taken the time to check each and every one of them.

EDIT: I do know that if you do the research the U.S. is not the top when it comes to mass shootings.

Also, if you're curious: I almost always add images after I've written the post. I try to find some that work well. In this case there are definitely flaws with that meme but I think the message I wanted from it is there.

you wanted a flawed message, got it.

Nah... I just don't nitpick if I don't like the narrative. Also I consider my time valuable. I don't think it is my duty to go do research for other people. Sometimes I may. Sometimes I may not.

Whether I wanted a "flawed" message or not did not enter my head. I also don't see proof that it is flawed. That'd require more research. You are the one hung up on it... so search, or don't. That is up to you.

this is what i'm talking about. the meme is flawed and you decided it was good enough for your argument. that seems sketchy to me, and i'm noting that for the blockchain.

The message I wanted was about the FACT that the places with the strictest gun control laws seem to have the most problems with gun violence.

Sketchy.

Only if you treat the narrative pushed by the propaganda machine as dogma.

I'm going to try one last time with you...

I wrote my words. Those are what I wanted to say. Then I went and quickly looked for images.

If you are going to be petty and look for B.S. reasons to point at something then you will almost ALWAYS be able to find such reasons.

I write what I mean. I don't try to find secret little implications for you to need to look for. No mind reading is required.

If you think you need to seek out escape hatches to ignore everything else someone else says so you can justify the mental spam filters being engaged then in most cases you will be able to find them.

If that is NOT what you are trying to do then I'll still extend you the benefit of the doubt.

If that IS what you are trying to do and many people actually do on a regular basis then I think that makes you foolish.

You don't need to seek little things to attack. Look at the whole.

All of us have little things we disagree on. If that is all we focus on then nothing is accomplished and it is a waste of both of our time.

It is also pretty telling that when I do the work you seem too lazy to do and actually look up the things about the Pulse night club you wanted... you shift. Let's find something else for you to poke at.

Give me a break.

Here is a little more quick searching... just how lazy are you that you demand I do this for you? It is not that hard. It is pretty annoying when people don't like something and make demands but they are unwilling to do the least bit of searching themselves.

From: Some Huffington post article - this is the first time I've read it - it's huffpost so take it with a grain of salt.

That was just one of several articles that talked about increased security and the security response or lack of security response. A lot of different messaging.

This is no longer hot cutting edge news so I am sure you can find plenty more to read if you are that concerned about it.

And for the record I am not saying what Trump stated about this. I am explicitly talking about the scouting out other locations before Pulse and not choosing them due to the obvious presence of security.

In the case of Pulse it doesn't seem like security that was there was particularly fast at responding. Thus, the reasoning by the lawsuits.

please don't make assumptions about me, a stranger on the internet. you're likely to bend yourself into all sorts of shapes if you think you need to hold my hand here. i can research perfectly well, but it also pays to know what sources an author is using. i didn't know much about this case beforehand, but your casual mention of it and then general appeal to "the actual statistics" felt off somehow.

this article from the Orlando Sentinel references the OPD's record of events: the off-duty officer exchanges fire with Mateen within minutes of his arrival at Pulse, and then calls in additional police support.

the official timeline of events used in this review by the National Police Foundation, states (page 21) that the off-duty officer engaged with Mateen within a minute of the first shots being fired.

so, had Mateen gone to the other locations he looked at and not seen security/police for some reason, would he have gone there? i don't know, maybe. seems like he wanted to shoot people. i'm sure he'd find targets eventually.

but that still leaves the question, what were you trying to say?

I thought that would be pretty clear. He didn't go to the first two places because he saw the heavily armed security. He did not see that at the Pulse Night Club.

Gun Control Laws do not stop people who plan to shoot other people. They only disarm honest law respecting people. They create an area where the people who DO plan to commit crimes know that the only people who can defend themselves from them are law enforcement who typically have a pretty long response time. Definitely usually much longer than it takes for them to shoot, rob, etc. people.

Gun Control Laws ONLY hurt law abiding citizens. They don't protect them.

Pulse night club example was an example of the shooter NOT going somewhere he knew someone armed was there to shoot back.

Did he plan to kill people? Most definitely. That I do not dispute.

Did he use a gun? Most definitely.

Would gun control have stopped that? Typically not at all. It actually makes it easier.

Criminals don't give a damn about gun control laws.

It does however disarm people that might be able to defend themselves and others.

why is this a good example for your argument? he purchased his guns legally and others with guns engaged him very quickly.

i was hoping for more talk about algorithms, honestly.

Yes. He purchased them legally. He wanted to shoot people. Shooting people is illegal. Do you actually think he would be stopped by gun control laws?

What would you like to know about algorithms?

Do you wish me to clarify why I brought up gun control or specifically what would you like to know?

I think if you actually wanted to know how Gun Control was remotely relevant that would have been a good question.

Just search engine. I provided my quick searches. As to where I read it/saw it/heard it years ago when this happened. Sorry I don't have perfect recall. Do you?

EDIT: I've also seen people discard sources because it is "I don't trust X", "I don't trust Y". To be honest I don't trust ANY source completely.

but let's trust those "actual statistics."

Did I say I did? Kindly show me. Again you seem to be looking for an escape hatch.

Why do you twist and turn and look for any corner to scurry into to try to nitpick and escape?

What is wrong with looking at the entirety of something?

EDIT: Some might call it cognitive dissonance. To me I suspect it is just a bad habit. Deflection. Looking for any reason to say "See... look here... look at this one thing... no ignore everything else... let's just point over here in this corner..."

i only have your words, as do other readers. i'm certain you use those words on purpose. you seem very careful. but if asked about them, you say otherwise, and accuse me of trying to manipulate you or run away.

i very much liked most of this article and i wanted to ruminate on it a bit, and the EDIT at the end with the offhand comments about guns left a sour taste in my mouth, along with the meme. you made those choices.

I would have loved to have heard your thoughts about the article.

Instead you wanted me to focus on the Pulse Night Club. You asked specific things. I actually searched for you, showing you how I searched. You then responded to the search dismissing the one thing I found. I searched again.

At that point you switched and focused on another thing.

I spoke on that.

Then you decided to indicate that I was trusting the statistics after I said I don't trust anyone completely.

You jumped from one little thing to nit pick to another. You deflected.

I do think you may have enjoyed the article. I'd actually welcome your ruminations.

I simply don't welcome people looking for escape hatches and focusing on certain things and ignoring the rest.

I took your approach at first as being honest and not doing that. I only noticed the pattern after you kept doing it.

Now I do see you THINKING here. I do think what you say is honestly what you think. I do not think you are lying.

I just don't think you see what I am pointing out yet.

I think you will...

So now I'll do what I have told people. When I am implying something I will state that I am. I am about to imply something.

Enjoy the seeds, may they grow interesting things.

This makes sense. I could see you engaging as such. Yet I stand by those words. If the truth leaves a sour taste then hopefully you acquire a taste for sour things.

Perhaps they were not offhand at all. Have you stopped to think how they might be relevant to the rest of the article?

I will offer you an apology on one front. I realize I am being rather harsh. I can tell you I don't enjoy it.

I am just done tip toeing. Things get worse when we try too hard not to offend, to be soft, etc.

I know it sucks. It does for me too.

I can tell you I wouldn't bother at all if I didn't care.

I don't like trolls. I consider it a waste of my time.

If I didn't think it was worth it I wouldn't talk to you the way I am.

I do not think I am superior to you. I don't think I am more intelligent than you. I am simply different than you. This is a good thing. It'd be a very bad thing if you were ME.

Be you. I also can tell you have a strong mind.

I pointed out that habit because I care. I have my share of problems. I have to watch them. I am sure I have other problems I haven't even noticed.

I am not trying to be egotistical. I am not trying to be condescending.

I simply know of no way to tell you what I am trying to tell you that can avoid appearing as some of those things.

Could I be wrong about what I am thinking? Absolutely.

Am I making some assumptions? Of course. All of us do. Life is largely based upon probabilities and how we judge them and make decisions.

Can an assumption make an Ass out of U and ME... yes. Yet that is a nice platitude. Platitudes are not always correct. They are just fun to say and make people think.

Actually I am going to apologize to you. I do think I got a little too "cocky" in my response to you. I even think some of my responses were condescending. That was not my intention.

I can't justify it. I suspect it may be because I am trying to do too many different things today at once and I rushed my response.

That is no excuse. It is simply me trying to think about it. I do apologize for that. You don't deserve everything I said to you.

Thanks for hanging in there with me anyway. That says something. I'm actually a little impressed. :)

Here is my apology:

https://peakd.com/blog/@dwinblood/example-of-how-not-to-handle-a-conversation-i-was-an-ass

If you have a zit should I just talk about that or should I pay attention to what you are talking about and who you are?

You've got a bad habit. You are not alone. You are using pretty common tactics I see a lot these days. If you want to keep pounding on me I'll try to help you break it.

I am not expecting you to agree with me. That is not the bad habit. The bad habit is you frantically looking for any little detail you can latch onto, be it a word, a phrase, anything you can say "see... do you see it" while you ignore ALL of the other things that are required for the true context.

You seek to destroy by pointing out things that by themselves you can frame as a negative. This only works by ignoring everything else.

It is disingenuous and it is pretty effective when used against most people. It is not effective when people have become aware of it.

I've got your number with this technique. If you want to talk about the whole then we might get somewhere.

If you keep wanting to look for justification for why I might be wrong... you'll always be able to find that.

Would it help you if I intentionally start misspelling things? Would you see it then?

At this point I am not trying to attack you at all. I truly want you to stop using this tactic. It is destructive. There is absolutely nothing constructive in the sense of actual communication about the technique.

Look at things in context. CHOOSE to try to understand what a person is trying to say rather than CHOOSING to find things you can dissect and attack.

For the record. I can plainly see you are not stupid. I am not trying to imply that.

I do see by what you are choosing to focus on that the habit I am referring to is there. Especially as I watch you bob and weave from one thing to another while ignoring everything else.

Actually I can't think of a case where banning anything actually works. It tends to create interest. It creates a new class of criminal. It creates black markets.

I don't know of anything banned or restricted that doesn't create something like that.

Most of the people I know (actually all except 1 of my sons) drank before they were 21. Many of them drank quite heavily if they could get alcohol before they were 21.

Banned books. Usually pretty popular.

Banned movies. Usually pretty popular.

Drugs. No problem getting those. It does create a new criminal class, a lot of violence and is often where a lot of the interactions between citizens and law enforcement happen.

I actually think it should all be voluntary.

Free up some of the drug war cash and put it into creating voluntary clinics to help people that want to be helped.

Stop telling people what they are not allowed to do. They tend to get curious and want to do it. There seems to be a big fascination with the taboo.

Guns are just one thing. There are many things.

I honestly cannot think of any situation where banning has actually worked. By worked I mean had an only positive outcome.

Can you? If so I'd love to hear about it. There may be a case but I can't think of one.

I certainly don't see banning and restricting guns as having a positive effect.

It actually seems to have a negative one if I look at the statistics of places that do it.

Only reacting to your actions and what you choose to focus on.

I didn't answer this. It doesn't mean Mateen had a clue he was there. That was my point. He skipped two locations after identifying armed security.

A minute is a long time to respond in a situation like this. That would seem to indicate that security guard was not paying attention, or was not close by.

Time 60 seconds...

My version of "Code is Law" has always been "Software doesn't Lie."

People who code the software sometimes do, and they also can write code with flaws.

So while it doesn't lie that doesn't mean it is not flawed or incorrect. It does what it was programmed to do. If it is complex it can also do things the programmer didn't plan for it to do.

I refer to discrepancies between code and documentation -- the code doesn't lie. It does what it is supposed to do, and yes, you can have both sloppy programmers and ill intent.

You can also have very complex systems with a lot of input which a person not being a God could not anticipate.

As projects become more complicated the odds of unforeseen uses and such increase.

That is neither ill intent nor sloppy programmers.

You just adapt and adjust your code accordingly.

True.

Interestingly enough you can make the code lie if that is your goal.

You see for something to be a lie the person has to knowingly state something false.

We could certainly write code that lies.

What the code does not do though is work outside of its programming. I suspect that is what you were meaning. I just thought it was interesting that we could actually write code to lie if that was our intention.

That is also true.

Hi tb,

Love what you wrote, I am ~10 years younger but not less a nerd and can relate to the nostalgia ;)

Hive is a classic playground for these "AI" games.

Maybe release a bot with the kids

Maybe in the future. I wrote one that lurked in email and on a BBS in college. I could maybe resurrect that. Not sure I'll want to spend my time that way but it is possible it could happen.

As the law of the contract says, free !PIZZA for you
