Dissecting The Social Dilemma

Thanks to this particular film, everyone seems to be talking about one of the least popular movie genres: documentaries.

How did I approach this documentary? With the same skepticism that the creators of the tools turning us into "voodoo dolls", who are featured in the film, want us to bring to social media. The documentary was directed by Jeff Orlowski, produced by Exposure Labs and Argent Pictures, and made available through Netflix, a platform already under scrutiny for building up a menu of content that seems to veer towards a certain liberal or progressive agenda. With all that in mind, the documentary seems a bit contradictory (assuming it is associated with that agenda) because it questions the very platforms that have allowed, say, environmentalist groups to challenge those who deny human impact on global warming, to gather followers of a green agenda to protest anything that threatens the environment, and to produce material that counters mainstream narratives.


In this documentary there is obviously the acknowledgement that Social Media and technology in general, especially that involving Artificial Intelligence, have worked miracles for our generation; as well as the disclaimer that it was not in the minds of the creators of all these tools that serve social media today to serve political or business agendas, manipulate people’s will, alienate them, or create addiction.

A dilemma is supposed to mean a catch-22 kind of problem, where no matter what choice you make, you are screwed. The documentary’s conclusions certainly put people in a big dilemma: they have to decide whether to stick to social media, knowing full well they are being manipulated, or quit and face possible ostracism, which in turn will make them more vulnerable to manipulation.

According to Tristan Harris (Google’s former design ethicist), the fundamental problem of social media does not have to do with technology's strengths but with human weaknesses. In Venezuela we call the “spell” Harris talks about witchery. With that term we too have tried to explain or justify the massive passivity of the people that has allowed the Bolivarian revolution to destroy our country and threaten the stability of the whole continent. And here lies the fundamental strength and weakness (depending on how you look at it) of the documentary’s argument: do people have free will? The interviewees seem to agree on a big NO. It is as if whatever AI wants us to do we will do.

I can’t help but wonder: does A.I. also impair our ability to exercise parental control, for instance? How can parents waive their responsibility and, in the name of children’s privacy and independence, allow technology, corporate interest, political agendas, and psychos to take control of their kids?

The docu-film intersperses interviews with a feature-film-like dramatization that provides interesting details about the film’s argument. In the first scenes a teenage girl ignores her mother’s call to help set the table for supper. She is too absorbed in her phone. The parents look helpless, clueless. A later scene illustrates this better: after the parents decide to remove all phones from the table and put them in a time-locked container, they watch the same teenage girl smash the box after just a few minutes. Why can’t parents control the use their children make of electronic devices? More than a corporate model problem, which is the documentary’s ultimate argument, for me it is a cultural problem, one that many cultures around the world have consumed and assimilated from the main content producers, whose products are being sold all over the world in different media formats.

Self-esteem is one of the main issues that comes up in the documentary, and I believe that before we blame any algorithm for our kids' and some adults' dependence, we should question what we are doing individually and as families to build defenses against digital or real-life manipulation.

Tim Kendall, a former Facebook executive, talks about the business model he helped build, which they borrowed from Google. He would later express regret that they knowingly decided to monetize people's dependence on the platform. But it still raises the question: don't people still have the will to choose whether or not they care about ads placed in their daily interactions? In my mind, the more intrusive an ad is, the less I want that product.

Computer scientist Jaron Lanier (Founding father of Virtual Reality) has a radical solution: delete all social media now! This can and should be done in places with good economies and high human development indexes, where things not only still work, but have improved exponentially: transportation, services such as phone and Internet, free press, etc. Ironically, these are the places where it would be most unlikely for something like that to happen. In places like Venezuela where not a single newspaper is circulating and most online press is censored, where phones and electricity fail constantly and where hyperinflation has made it impossible for people to move around, a measure like that can have devastating effects.

Roger McNamee, an early Facebook investor, and Aza Raskin (former employee at Firefox and Mozilla Labs), among others, highlight that, unlike the companies of the past that sold or exchanged tangible products for users to use or consume, for the mega tech industries of today “the customers are the advertisers; we, [users], are the thing being sold.”

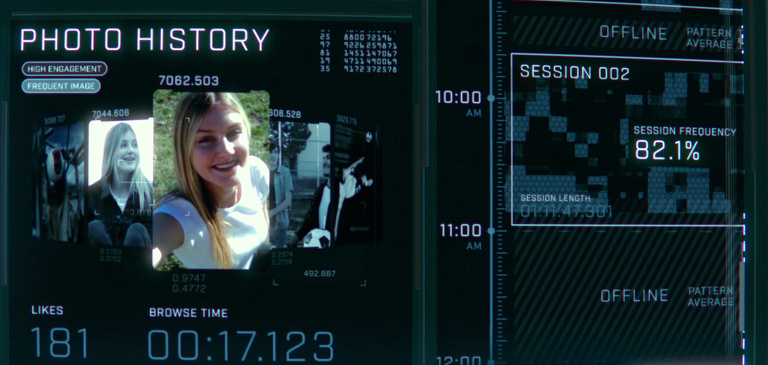

Jeff Seibert, former Twitter executive, echoes the concept of “surveillance capitalism”, which is not that new and had actually been anticipated by most sci-fi writers, according to which “everything we do online is being tracked, watched, and measured.” It is a chilling realization, though, to have this confirmed by the very Dr. Frankensteins who created the monster that looks at us from the other end of the screen and records everything we look at and for how long! They basically know our feelings and, based on our habits online, can generate options that seem to us like choices we make, but which are in fact their way of driving us into predictable places based on our habits and states of mind.

Sandy Parakilas (former Facebook operations manager) clarifies the old data-sharing assumption:

“It’s not that our data is being sold. They build models that predict our actions, whoever has the best model wins.” Thus, every person connected to this massive AI machinery becomes, in their words, a voodoo doll that is individually manipulated by algorithms.

These algorithms have three goals: Engagement (keeps you scrolling), Growth (keeps you coming back and inviting more friends), and Advertisement (makes sure that while all that is happening someone is making more money through ads).


According to this, Lanier argues, this generation, which prioritizes online connection, is being financed by a “sneaky” third party “who is paying to manipulate” whoever gets connected. Communication and culture ultimately mean mere manipulation.

How do they do it? According to Harris, this is not different from magic.

But I’ll tell you about that in the next post because this is getting too long.

Thanks for stopping by

https://twitter.com/yagguaraparo/status/1317291822113296386

I need to come back and read this properly. I have not seen it, and not sure I will. Coming back to read in more depth and comment.


Thanks :)

I am coming back to read this properly!



Thanks for the support

I will watch it so I can give a fair opinion. Thanks for sharing, @hlezama. Posts like this help open our eyes. Sometimes we sink into a bubble-like routine and do not notice the external agents at work.

Of course, Eva. The documentary is worth watching. It is controversial, and only time will tell how accurate or well-intentioned it was.

And here lies the fundamental strength and weakness (depending on how you look at it) of the documentary’s argument: do people have free will? They seem to agree on a big NO. It is as if whatever AI wants us to do we will do.

We've been working on this "problem" since at least 2001,

Fascinating. I had not heard of that game. Does the dialogue take place while people play the game, or is it some kind of introduction to it? I am not into games, so please excuse my ignorance of how they work.

Now, does your use of quotation marks around "problem" mean you do not see us as deprived of free will? I want to believe we can always have a say; we can choose whether to believe the fake news or not and whether to act upon it (and how) or not.

The scene is from the end of the game.

Well, mostly it's not a real "problem" because it's shockingly easy to distinguish FACTS from OPINIONS.

FACTS must be empirically demonstrable and/or logically necessary QUANTA (emotionally meaningless).

OPINIONS must be personal, experiential, unfalsifiable, GNOSIS, private QUALIA (emotionally meaningful).

In 23 seconds,

Hahaha. I loved that movie. Did not remember that part, though. Now that you bring it up...

The thing is that in this information age (which in the documentary is called the disinformation age), we have people presenting facts about, say, a successful treatment for covid-19, and 5 minutes later we have another source offering facts, stats, or cases saying exactly the opposite about said treatment and offering either a different one, based on some more facts, or a helpless scenario according to which no treatment works 100% yet. It's very frustrating not to be able to fact-check some of these sources, and when we see this kind of discrepancy, especially among the scientific community, we feel really lost.

I know lots of people who have opted for disconnecting themselves from any news or social media feed to just keep their sanity and peace of mind.

I think the whole "problem" is just an excuse for CENSORSHIP.

It would be extremely simple to teach school children the difference between FACTS and OPINIONS.

Congratulations, your post has been upvoted by @dsc-r2cornell, which is the curating account for @R2cornell's Discord Community.


Thank you very much, @dsc-r2cornell. I appreciate your support.

I haven't seen this, and I'm not sure I shall. I do think though, and from other comments, not dissimilar from yours, that the issue remains how people make use of social media. A healthy skepticism is really important, as is an awareness of what an online presence means, in every sense of the word. I do also know that if a service is "free", it isn't really. Like a "free lunch", there will always be some sort of quid pro quo, and one needs to be alert to what that might be.

I am not in favour of charging for news shared on the social media - as is being proposed in Australia. I am only too aware of the need for free speech and a free media and in countries like yours and mine, we need both. Social media are vital to the dissemination of information for a slew of reasons. The challenge is stopping the spread of fake news which, of course, is much bolstered by a particular US presidential hopeful.

The challenge, when it comes to children, is not the children but the parents. That a child gets to the point of using violence to access cell phones means that there is a deep-rooted problem and, IMO, that it began with the parents.

Now going to read your second installment!

Haha. Good point. I'll get to that in the next post. As I mention in Part II of my commentary, it isn't until the main character of the dramatization gets involved in politics that the real tension of the film emerges and there is the implicit suggestion that measures must be taken.

I think that social media has allowed all angles of the political spectrum to express themselves and create communities. Of course, as happened with the written press and television, those with more money and power will tilt the boat their way. So, removing the youth from the screen not so much because they may get sick and even hurt themselves, but because they are going to get contaminated by the wrong political ideas (now that other means of communication and sources of information are disappearing or are being absorbed by SM) may be challenging and counterproductive for any political agenda.

Hello @hlezama,

I tried to find some answers on what you discuss in this article. I do not find it too long :) - because I am writing long comments and posts myself :) I think you point out important issues, in particular on "passivity". To make the read more convenient, I used titles.

Physical social life is just experiencing the climax of its extinction.

This development began long before the internet, it began with urbanisation and the large, resulting anonymous masses of people living side by side in apartment buildings and not knowing each other. Anti-social life took place through the capitalisation of each individual person and his or her labour force by means of the division of labour and the insignificance of families.

The way of life, dominated by paid work, made traditions and family ties shrink to superficial gatherings, and conflicts come to light in these rare gatherings at Christmas or other holidays, where people realise that they have become fundamentally incapable of having deeper relationships.

The individual human being, in deepest dependence on paid employment and at the greatest distance from his family of origin and his birthplace, has no real individuality.

Things that have been sold to one as "self-realisation" and "independence" are an illusion. Created through advertising, first in books, then in all later forms of media, which give ideas to the mind but leave the body inactive. ... I very much agree with what you said about ads.

Where no one is related to anyone else, only temporarily artificially created identities can emerge, depending on where one finds gainful employment. Reliable long-term relationships are impossible to achieve on this basis.

Companies (and other things) come and go and with them people scatter to the four winds.

The same is true of the flats in which those who pay rent live and never know when the next neighbour will move in or out. The fluctuations are high, but the constant, the basis, it dissolves into nothing. The attempt to create a stable basis in a world where nomadism has been abandoned and everyone is forced to take on a nationality and accept the borders of countries, reveals the paradox of a cemented existence, which on the one hand restricts the freedom of the place of residence, the lifestyle, and on the other hand promises individual freedom through massive advertising and cinematic visualisation.

Thus, the individual is either very clearly or subliminally aware that although he should feel like an individual, any individual peculiarity, deviation and otherness will immediately put him at a social disadvantage insofar as he does not function, or even only appears to no longer wish to fulfil his collective function.

However, it is not the small family or tribal community that supports this collective sense of "we", but the large anonymous Internet world "community", which of course is not such. It is like the thought of a warming campfire, but it is not warming because it is just a thought.

Parents are at a loss because they themselves were born into such a society.

Unless they know that their life has gone exactly according to plan, which nobody has done, but in which everyone has participated, the illusion of freedom and individuality may hold.

But in crises like the present one, there is a deep-seated fear and the habit of obeying the collective. So in principle there is no true kindness between two individuals if there are not a hundred others around them capable of friendship and reliable relationship.

Forty percent of single households in a country speak a clear language.

One can no longer imagine an even stricter separation, but as we see in films, this can certainly be increased. It is the separation to oneself, to one's own existence as a person of integrity. The person is denied to know how he should feel if others do not tell him: the experts (placed by governments or corporations) or the swarm. Who no longer even knows whether he is healthy or sick when the external world does not tell him. And it does it faster and faster and more and more strictly, more and more superficially. So feedback becomes an all important matter, because what is being fed back does not basically meet anything sovereign.

Having said this, on the other hand I think this crisis/climax maybe also is a chance. What do you think?

I agree with your views and I appreciate your taking the time to elaborate on your comment.

I grew up in a small town surrounded by similarly small towns where people usually were born, made a living, had kids, passed on whatever knowledge or skill they had to another generation and died without ever having left their towns except for intermittent forced absences and/or vacations.

Everybody knew almost everybody. With that kind of background it is hard to move to cities, then visit other countries, and see the urban dynamic, the isolation, the routines. Social media has only magnified that isolation under the paradoxical illusion of making you one with the whole world because now you can meet people from anywhere and learn about "different" cultures, etc. All these cultures have already gone through the same process of homogenization in the name of globalization, and except for the exoticism of a picture here and there, there isn't much newness that we can actually experience because we are stuck to a device to begin with.

I recently interacted with someone here on Hive who has never been able to own a pet because he's always moving, always renting, traveling. For many people this has been life, and in many countries the emerging generation of professionals sees this as a desirable future. The new jobs do not offer you stability or freedom.

And, of course, you will always have some therapy available or rehab in case you crash. As you say, the feedback we need comes from external sources that will not tell us anything we would not discover ourselves if we try. Instead they will give us more of what the system needs to keep us playing.

I am not sure about the changes that can emerge out of this crisis, or whether societies will take advantage of the chance to change what must be changed. I think that people can make changes at the personal level, and if enough people do the same, then we'll see more drastic changes for the better, but usually these changes are channeled through agents who do not always represent people's best interest.

Thank you for your answer.

Same here. That's an imprint which lasts a lifetime, I guess.

And although it is true that a village that refers too much to itself and can become a hostile place for strangers, transients, eccentrics and exotics has a certain homogeneity, it is precisely this uniformity and permanence that makes it an important base for those who have left and those who occasionally return home. Communities which, despite their rather moderate way of life, welcome the fact that carnival is held once a year, which look forward to news and travellers who come from far away, which welcome the novelty from outside, have, in my opinion, found a lifestyle which is worthy of imitation. A community must not be too closed, but neither must it be too frayed.

But as you have said, and as I can deduce from this and add my observations, even small communities are deprived of their own special identity. Through globalisation and a world idealised by the media, in which all people think, do and say the same thing. Yes, even wanting to look the same, whatever that might be. Their special local, seasonal products such as cheese or certain crafts: they now exist in the imagination and are imitated by large corporations and productions. Advertising is always looking for the one thing, the special, the exotic and makes it work for itself.

The dumbing-down of language does its part.

Yes, a play it is. Sometimes I hope to maintain a more humorous way of looking at this modern life, as a theatre play, in order not to become a grim and despairing person, which I sometimes tend towards the older I get. I work with humour when I am face to face with people. One can become creative and irritate humans in a good way.

Yes, in a personal way this crisis is a chance to confront the deeper realms of the inner life. Even to think of a "society that shall change" is already a trap we like to run into, for the great politicians and other game changers always talk about the "big things" like "nations" and "the world". That's why everything with worth & pride carries the term "world" in its name ;-)

So good, talking to you. Feel sincerely greeted.